Normal people should not know names like “US-East-1”, someone said this week on Mastodon, more or less. “US-East-1” is the region of Amazon Web Services that went down last month to widely disruptive effect. What this social media poster was getting at, while contemplating this week’s Cloudflare outage, is that the recent series of Internet outages has turned network nodes previously known only to technical specialists into household names.

For the history-minded, there was a moment like this in 1988, when a badly written worm put the Internet on newspapers’ front pages for the first time. The Internet was then so little known that every story had to explain what it was – primarily, then, a network connecting government, academic, and corporate scientific research institutions. Now, stories are explaining network architecture. I guess that’s progress?

Much less detailed knowledge was needed to understand what happened on Tuesday, when Cloudflare went down, taking with it access to Spotify, Uber, Grindr, Ikea, Microsoft Copilot, Politico, and even, in London, its VPN service (says Wikipedia). Cloudflare offers content delivery and protection against distributed denial of service attacks, and as such it interposes itself into all sorts of Internet interactions. I often see it demanding action to prove I’m not a robot; in that mode it’s hard to miss. That said, many sites really do need the protection it offers against large-scale attacks. Attacks at scale require defense at scale.

Ironically, one of the sites lost in the Cloudflare outage was DownDetector, which helps you work out whether the site you can’t reach is down for everyone or just for you. It is one of several such debugging tools for figuring out who needs to fix what.

So, Cloudflare was Tuesday. Amazon’s outage was just about a month ago, on October 20. Microsoft Azure’s outage, which also involved DNS, came about a week later. All three of these had effects across large parts of the network.

Is this a trend or just a random coincidental cluster in a sea of possibilities?

One thing that’s dispiriting about these outages is that so often the causes are traceable to issues that have been well understood for years. With Amazon it was a DNS error. Microsoft also had a DNS issue “following an inadvertent configuration change”. Cloudflare’s issue may have been less predictable: The Verge reports its problem was a software crash caused by a “feature file” used by its bot management system abruptly doubling in size, taking it above the size the software was designed to handle.
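For readers who like to see the failure mode, here is a minimal sketch of how software might refuse an oversized feature file and keep running on the last good one instead of crashing. This is not Cloudflare's code: the names `load_features` and `refresh`, the limit of 200, and the one-feature-per-line format are all assumptions made up for illustration.

```python
MAX_FEATURES = 200  # hypothetical hard limit, chosen only for illustration


class FeatureFileTooLarge(ValueError):
    """Raised when a feature file exceeds the size the loader was built for."""


def load_features(lines, limit=MAX_FEATURES):
    """Parse one feature per non-blank line, refusing oversized input."""
    features = [line.strip() for line in lines if line.strip()]
    if len(features) > limit:
        raise FeatureFileTooLarge(
            f"{len(features)} features exceeds limit of {limit}"
        )
    return features


def refresh(current, new_lines, limit=MAX_FEATURES):
    """Return the new feature list if it is valid, else keep the current one."""
    try:
        return load_features(new_lines, limit)
    except FeatureFileTooLarge:
        # Reject the bad update and keep serving with the last known-good list.
        return current


good = refresh([], ["f1", "f2", "f3"], limit=4)
doubled = ["f1", "f2", "f3"] * 2  # a file that has abruptly doubled in size
still_good = refresh(good, doubled, limit=4)
print(still_good)
```

The design choice here is simply that a bad update is treated as a rejected update rather than a fatal error. Whether that trade-off is right for any real system depends on details the reports don't give.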

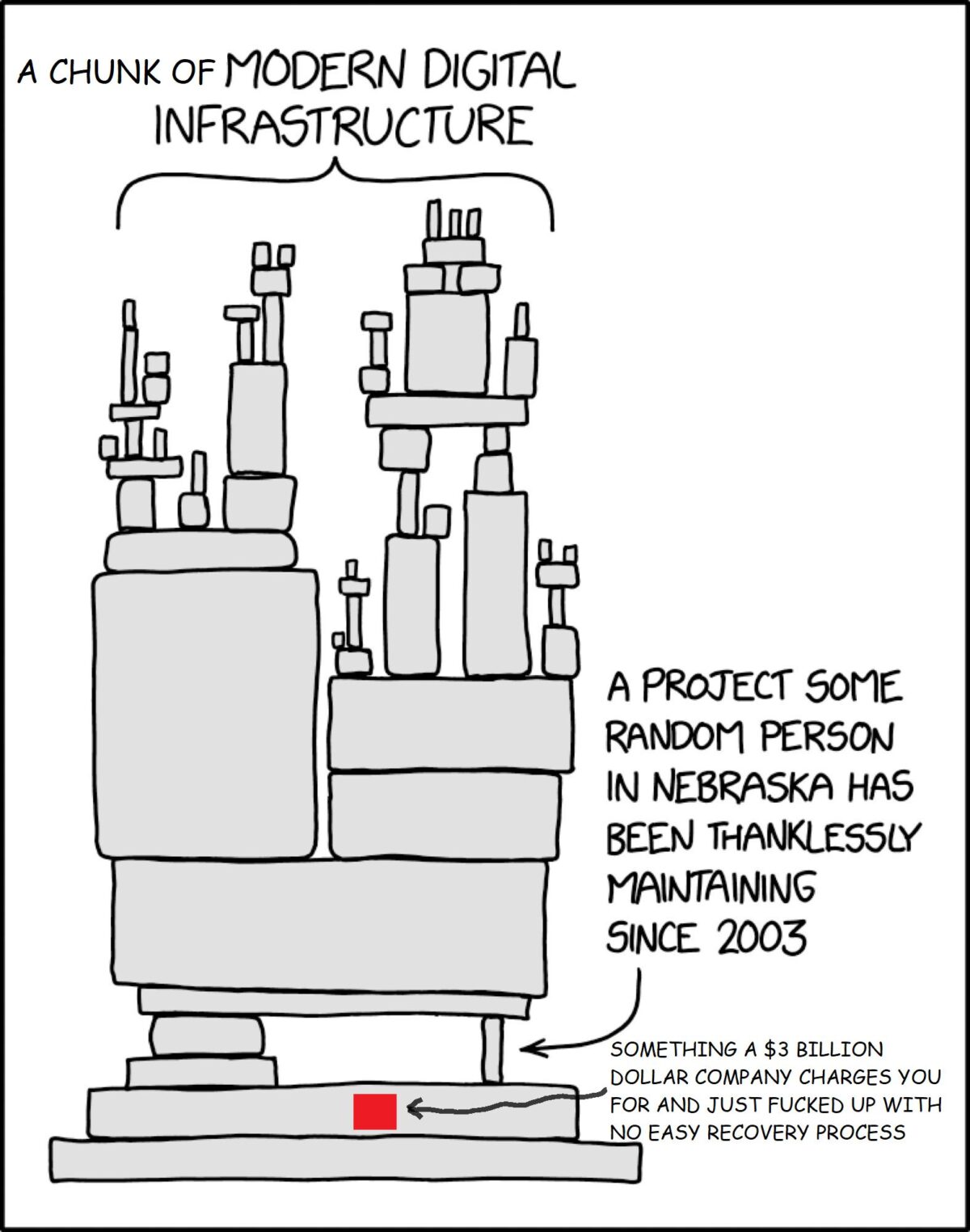

Also at The Verge, Emma Roth thinks it’s enough of a trend that website owners need to start thinking about backup – that is, failover – plans. Correctly, she says the widespread impact of these outages shows how concentrated infrastructure service provision has become. She cites Signal CEO Meredith Whittaker: the encrypted messaging service can’t find an alternative to using one of the three or four major cloud providers.

At Krebs on Security, Brian Krebs warns sites that managed to pivot their domains away from Cloudflare to keep themselves available during the outage need to examine their logs for signs of the attacks Cloudflare normally protects them from and put effort into fixing the common vulnerabilities they find. And then also: consider spreading the load so there isn’t a single point of failure. As I understand it, Netflix did this after the 2017 AWS outage.

For any single one of these giant providers, significant outages are not common. This was, Jon Brodkin says at Ars Technica, Cloudflare’s worst outage since 2019. That one was due to a badly written firewall rule. But increasing size also brings increasing complexity, and, as these outages have also shown, even the largest network can be disrupted at scale by a very small mistake.

Elsewhere, a software engineer friend and I have been talking about “mean time between failures”, a measure normally applied to hard drives, servers, or other components. There, it’s much more easily measured – run a load of drives, time when they fail, take an average… With the Internet, so much depends on your individual setup. But beyond that: what counts as failure? My friend suggested setting thresholds based on impact: number of people, length of time, extent of cascading failures. Being able to quantify outages might help get a better sense of whether it’s a trend or a random cluster. The bottom line, though, is clear already: increasing centralization means that when outages occur they are further-reaching and disruptive in unpredictable ways. This trend can only continue, even if the outages themselves become rarer.
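To make the measurement idea concrete, here is a rough sketch of both halves: the drive-style average, and a version for outages that only counts incidents crossing impact thresholds. Everything in it is an assumption for illustration: the `Outage` fields, the threshold values, the rule that an outage must cross all three thresholds, and the sample data, which is invented and does not describe any real incident. It also simplifies by measuring gaps between outage start times rather than uptime between failures.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Outage:
    name: str
    start: datetime
    duration: timedelta
    people_affected: int       # hypothetical estimate
    downstream_services: int   # hypothetical count of cascading failures


def mean_time_to_failure(hours_until_failure):
    """Drive-style measure: run a batch, time each failure, take the average."""
    return sum(hours_until_failure) / len(hours_until_failure)


def counts_as_failure(outage, min_people=1_000_000,
                      min_duration=timedelta(hours=1), min_downstream=10):
    """Assumed rule: an outage counts only if it crosses all three thresholds."""
    return (outage.people_affected >= min_people
            and outage.duration >= min_duration
            and outage.downstream_services >= min_downstream)


def mean_time_between_outages(outages, **thresholds):
    """Average gap between the start times of consecutive qualifying outages."""
    qualifying = sorted(
        (o for o in outages if counts_as_failure(o, **thresholds)),
        key=lambda o: o.start,
    )
    if len(qualifying) < 2:
        return None  # not enough data to talk about a gap
    gaps = [later.start - earlier.start
            for earlier, later in zip(qualifying, qualifying[1:])]
    return sum(gaps, timedelta()) / len(gaps)


# Invented sample data, not real incidents.
SAMPLE = [
    Outage("A", datetime(2000, 1, 1), timedelta(hours=3), 5_000_000, 50),
    Outage("minor", datetime(2000, 1, 5), timedelta(minutes=20), 10_000, 1),
    Outage("B", datetime(2000, 1, 11), timedelta(hours=2), 2_000_000, 30),
    Outage("C", datetime(2000, 1, 31), timedelta(hours=6), 8_000_000, 80),
]

print(mean_time_to_failure([100, 200, 300]))
print(mean_time_between_outages(SAMPLE))
```

Changing the thresholds changes the answer, which is rather the point: until there is agreement on what counts as a failure, any such number says as much about the definition as about the network.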

Most of us have no control over the infrastructure decisions sites and services make, or even any real way to know what they are. We can counter this to some extent by diversifying our own dependencies.

In the first decade or two of the Internet, we could always revert to older ways of doing things. Increasingly, this is impossible, either because those older methods have been turned off or because technology has taken us places where the old ways didn’t go. We need to focus a lot more on making the new systems robust, or face a future as hostages.

Illustrations: Traffic jam in New York’s Herald Square, 1973 (via Wikimedia).

Also this week

– At the Plutopia podcast, we interview Jennifer Granick, the Surveillance and Cybersecurity counsel at the ACLU, about the expansion of government and corporate surveillance and the increasing threat to civil liberties.

– At Skeptical Inquirer, I interview juggling mathematician Colin Wright about spreading enthusiasm for mathematics.

Wendy M. Grossman is an award-winning journalist. Her Web site has an extensive archive of her books, articles, and music, and an archive of earlier columns in this series. She is a contributing editor for the Plutopia News Network podcast. Follow on Mastodon or Bluesky.