This week, Amazon announced it would cripple a bunch of older Kindle models. Users will be able to go on reading the books that are already stored on their devices, but they won’t be able to add anything new, whether it’s borrowed, bought, or downloaded. The switch will be toggled on May 20, and applies to Kindle ereaders and Kindle Fires released in or before 2012. These devices had already been locked out of the store in 2022.

There is no benefit to consumers from doing this, even though Amazon is offering a substantial discount on newer devices for those who want to switch. There are presumably benefits to Amazon – namely, that it can update its store and other software without having to cater to older devices, plus sell an extra bunch of new ones. But overall, it’s a globally hostile move that creates a new pile of electronic waste composed of functioning hardware. One of these days, that’s going to be your car when some auto manufacturer decides that a “legacy” model isn’t worth supporting any more.

One option is to stop buying devices whose manufacturers demand that you surrender ownership in favor of their ongoing control. The other main possibility is to continue spreading right to repair laws so that a company like Amazon (or John Deere) wishing to rid itself of the responsibility of supporting older devices would be required to open them up to their users and to the third-party ecosystem that would doubtless form around them. As the population of Internet of Things devices continues to grow around the world, there will be much more of this – when we need much less. It’s absolutely maddening. Try a Kobo and Project Gutenberg.

It came out this week that language added in October 2025 to Microsoft’s terms of use says: “Copilot is for entertainment purposes only. It can make mistakes, and it may not work as intended. Don’t rely on Copilot for important advice. Use Copilot at your own risk.”

This is like the disclaimers that psychics and mediums in the UK issue to prospective customers to prevent exploitation of the credulous. A software company, though…if it’s going to admit up front that the service it’s providing can’t be relied on, why force it on people in the first place?

Obviously, the point here is to cover the company’s ass in case of lawsuits. It fits right in with companies’ general refusal to accept liability for problems created by the software they make. I’m glad the company is warning people, but the better solution – if the company can’t bring itself to discontinue Copilot altogether – would be either to remove it and let people download it if they want it, or to leave it turned off by default, with the warning delivered up front instead of buried in terms of use that few people read. Instead, what we have to do is search for instructions to remove it and hope Microsoft hasn’t made following them impossible.

In the midst of a lot of science-fictional hype-scares about “AI”, along come some more real concerns. We’ll start “small”: The New York Times has done a study that finds that Google’s AI Overviews are right 90% of the time. That sounds not so bad until you remember the Law of Truly Large Numbers. Apply the remaining 10% to trillions of searches and the overall result is, as Ryan Whitwam puts it in summarizing the story for Ars Technica, “hundreds of thousands of lies going out every minute of the day”. That’s automation for you. It used to be that you needed an army of bots to disseminate misinformation at any sort of scale, and even then it was less effective since it wasn’t backed by an apparently authoritative name (see also Microsoft, above).
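For anyone who wants to check that arithmetic, here is a rough back-of-the-envelope sketch. The 90% accuracy figure is from the study cited above; the annual volume of one trillion answers is purely my illustrative assumption, not a number from the Times or Ars Technica, and the function name is made up for the example.

```python
# Back-of-the-envelope check: how many wrong answers per minute does a
# 10% error rate produce at very large scale?

MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes in a non-leap year


def wrong_answers_per_minute(answers_per_year: float, accuracy: float) -> float:
    """Average number of incorrect answers served each minute."""
    error_rate = 1.0 - accuracy
    return answers_per_year * error_rate / MINUTES_PER_YEAR


# ASSUMPTION: one trillion answers a year, chosen only for illustration.
ASSUMED_ANSWERS_PER_YEAR = 1e12

per_minute = wrong_answers_per_minute(ASSUMED_ANSWERS_PER_YEAR, accuracy=0.90)
print(f"{per_minute:,.0f} wrong answers per minute")
```

Under that assumption the result comes out at roughly 190,000 wrong answers a minute, which is at least consistent with “hundreds of thousands”. A larger assumed volume pushes the figure up proportionally.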

More alarming are the reports surfacing about generative AI’s effectiveness at aiding and amplifying online crime. In November, Google announced internal researchers had found hackers experimenting with a script that interacts with Gemini’s API to create just-in-time modifications. Last month, at Hacker Noon polymorphic viruses, but again, this appears to be a step up in sophistication and speed.

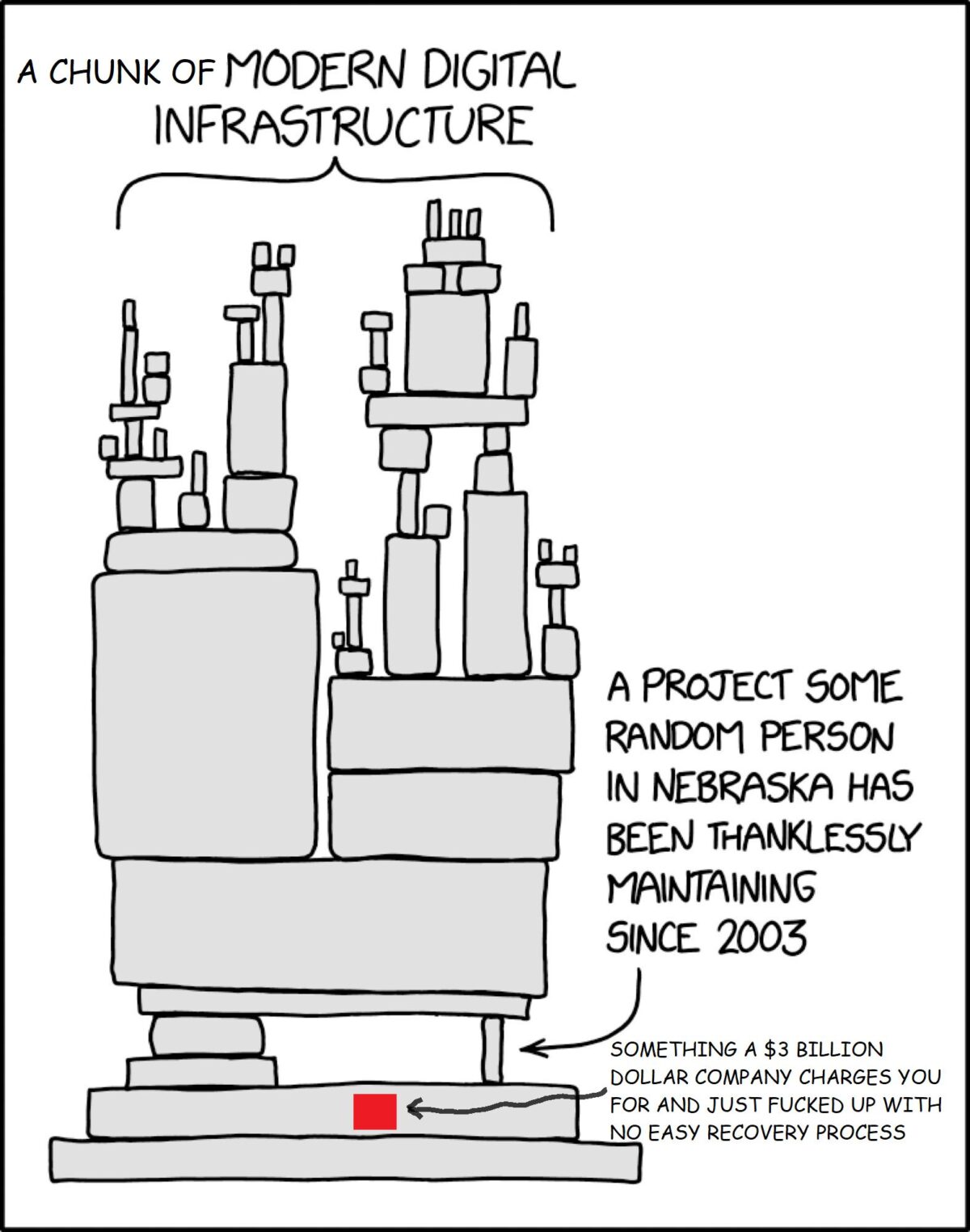

At the same time, Casey Newton reports at Platformer, Anthropic’s latest model is likely the first of many that can find and exploit vulnerabilities in software in “ways that far outpace the efforts of defenders”. The announcement, which appears to have been inadvertent, was quickly followed by another, formally launching the new model, Claude Mythos, and Project Glasswing. The latter, per Newton, gives more than 40 of the biggest technology companies early access so they can use the model to find and patch vulnerabilities in both their own systems and open source systems that underpin digital infrastructure.

Unlike most AI-related scare stories, this one is backed by people who are usually sensible, such as Alex Stamos, formerly chief security officer at Facebook and Yahoo, and other security practitioners. These warnings are not coming from AI company CEOs with a concept to sell.

So: on the one hand, (hopefully) better software; on the other, (potentially) newer, more dangerous attacks. Ugh. To restore calm, I recommend Terry Godier’s essay The Last Quiet Thing.

Illustrations: Bill Steele, who in 1970 wrote the environmental anthem Garbage!.

Wendy M. Grossman is an award-winning journalist. Her Web site has an extensive archive of her books, articles, and music, and an archive of earlier columns in this series. She is a contributing editor for the Plutopia News Network podcast. Follow on Mastodon or Bluesky.