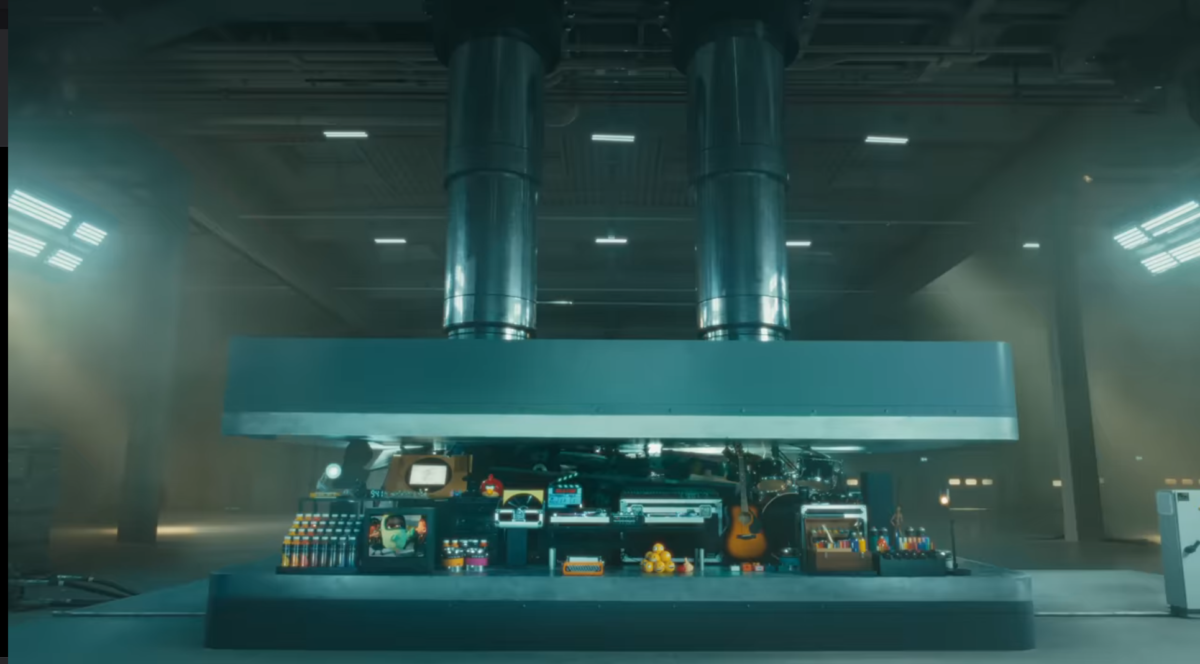

It was immediately tempting to view the absence of apostrophes on new street signs in a North Yorkshire town as a real-life example of computer systems crushing human culture. Then, near-simultaneously, Apple launched an ad (which it now regrets) showing just that process, raising the temptation even more. But no.

In fact, as Brandon Vigliarolo writes at The Register, not only is the removal of apostrophes in place names not new in the UK, but it also long precedes computers. The US Board on Geographic Names declared apostrophes unwanted as long ago as its founding year, 1890, apparently to avoid implying possession. This decision by the BGN, which has only made five exceptions in its history, was later embedded in the US’s Geographic Names Information System and British Standard 7666. When computers arrived to power databases, the practice carried on.

All that said, it’s my experience that the older British generation are more resentful of American-derived changes to their traditional language than they are of computer-driven alterations (one such neighbor complains about “sidewalk”). So campaigns to reinstate missing apostrophes seem likely to persist.

Blaming computers seemed like a coherent narrative, not least because new technology often disrupts social customs. Railways brought standardized time, and the desire to simplify things for computers led to the 2023 decision to eliminate leap seconds in 2035 (after 18 years of debate). Instead, the apostrophe apocalypse is a more ordinary story of central administrators putting their own convenience ahead of local culture and custom (which may itself be contested). It still seems like people should be allowed to keep their street signs. I mean.

***

Of course language changes over time and usage. The character limits imposed by texting (and therefore exTwitter and other microblogging sites) brought us many abbreviations that are now commonplace in daily life, just as long before that the telegraph’s cost per word spawned its own compressed dialect. A new example popped up recently in Charles Arthur’s The Overspill.

Arthur highlighted an article at Level Up Coding/Medium by Fareed Khan that offered ways to distinguish between human-written and machine-generated text. It turns out that chatbots use distinctively different words than we do. Khan was able to generate a list of about 100 words that may indicate a chatbot has been at work, as well as a web app that can check a block of text or a file in one go. The word “delve” was at the top.
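For the curious, a word-list check of this kind can be sketched in a few lines of Python. This is only a minimal illustration of the general idea, not Khan's method or his app: the function names are invented here, and apart from “delve” the entries in the list are placeholders rather than words taken from his list.

```python
import re
from collections import Counter

# Hypothetical marker list, for illustration only. "delve" comes from the
# column above; the other entries are placeholders, not Khan's actual list.
MARKER_WORDS = {"delve", "tapestry", "testament"}


def tokenize(text: str) -> list[str]:
    """Split text into lowercase words (letters and apostrophes only)."""
    return re.findall(r"[a-z']+", text.lower())


def marker_hits(text: str) -> Counter:
    """Count exact, case-insensitive matches of each marker word."""
    return Counter(w for w in tokenize(text) if w in MARKER_WORDS)


def marker_rate(text: str) -> float:
    """Marker words per 1,000 words; 0.0 for empty text."""
    words = tokenize(text)
    if not words:
        return 0.0
    hits = sum(1 for w in words if w in MARKER_WORDS)
    return 1000 * hits / len(words)
```

Calling `marker_hits("Let us delve into it.")` counts one occurrence of “delve”. Note that the sketch matches exact words only (it would miss “delves”), and that a hit shows only that a word appears, not who or what wrote it.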

I had missed Khan’s source material, an earlier claim by Y Combinator founder Paul Graham that “delve” used in an email pitch is a clear sign of ChatGPT-generated text. At the Guardian, Alex Hern suggests that an underlying cause may be the fact that much of the labeling necessary to train the large language models that power chatbots is carried out by badly paid people in the global South – including Africa, where “delve” is more commonly used than in Western countries.

At the Premium Times, Chiamaka Okafor argues that therefore identifying “delve” as a marker of “robotic text” penalizes African writers. “We are losing sight of an opportunity to rewrite the AI narratives that exclude people in the global majority,” she writes. A reminder: these chatbots are just math and statistics predicting the next word. They will always regress to the mean. And now they’ll penalize us for being different.

***

Just two years ago, researchers fretted that we were running out of “high-quality text” on which to train large language models. We’ve been seeing the results since, as sites hosting user-generated content strike deals with LLM owners, leading to contentious disputes between those owners and sites’ users, who feel betrayed and ripped off. Reddit began by charging for access to its API, then made a deal letting Google use its database of posts for training in exchange for an injection of cash that enabled it to go public. Yesterday, Reddit announced a similar deal with OpenAI – and the stock went up. In reality, these deals are asset-stripping a site that has consistently lost money for 18 years.

The latest site to sell its users’ content is the technical site Stack Overflow. Developers who offer mutual aid by answering each other’s questions are exactly the user base you would expect to be most offended by the news that the site’s owner, the investment group Prosus, which bought the site in 2021 for $1.8 billion, has made a deal giving OpenAI access to all its content. And so it proved: developers promptly began altering or removing their posts to protest the deal. Shortly thereafter, the site’s moderators began restoring those posts and suspending the users.

There’s no way this ends well; Internet history’s many such stories never have. The site’s original owners, who created the culture, are gone. The new ones don’t care what users *believe* their rights are if the terms and conditions grant an irrevocable license to everything they post. Inertia makes it hard to build a replacement; alienation thins out the old site. As someone posted to Twitter a few years ago, “On the Internet your home always leaves you.”

’Twas ever thus. And so it will be until people stop taking the bait in the first place.

Illustrations: Apple’s canceled “crusher” ad.

Wendy M. Grossman is the 2013 winner of the Enigma Award. Her Web site has an extensive archive of her books, articles, and music, and an archive of earlier columns in this series. She is a contributing editor for the Plutopia News Network podcast. Follow on Mastodon.