In the early 2010s, after “nano” and before “AI”, 3D printing was the technology that was going to change everything. Then it seemed to go quiet except for guns.

“First we will gain control over the shape of physical things. Then we will gain new levels of control over their composition, the materials they’re made of. Finally, we will gain control over the behavior of physical things,” Hod Lipson and Melba Kurman wrote in their 2013 book, Fabricated. As far as I can tell, we’re still pretty much in the era of making physical things that could be made by traditional methods rather than weird new shapes that could *only* be produced by additive manufacturing. More than 15 years after a fellow technology conference attendee excitedly lectured me that 3D printing was going to change everything, its growth remains largely hidden from most of us.

Until this past week, when I attended an event awash in puzzle makers and discovered that 3D printing has been a godsend to them for making not only prototypes but also small runs of copies or published designs, freeing them from having to find space and capital for the kind of quantities required by traditional production. It’s good to see a formerly hyped technology supporting clever and entertaining human invention.

Exploding egg, anyone?

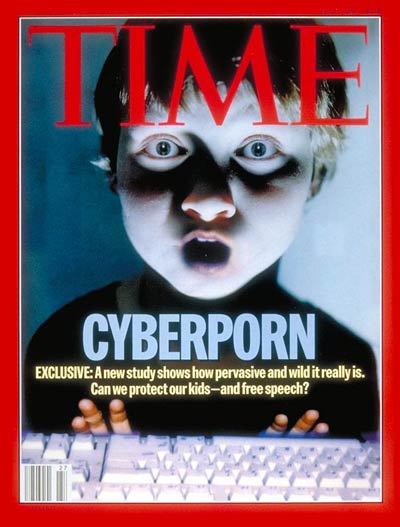

In one of the biggest fines in its history, the UK Information Commissioner’s Office has announced it is fining Reddit £14.5 million for failing to put in place an effective age verification mechanism to block under-13s from using the site under Reddit’s stated terms of service. The story is somewhat confused by timing: the fine is under data protection law and relates to the period before the arrival of the Online Safety Act, but the OSA’s requirement for age verification brought the changes that sparked the fine. Reddit says it will appeal.

In the UK terms and conditions Reddit announced in June 2025, the company says that “by using the services, you state that…you are at least 13 years old”. But Reddit didn’t require proof, and the ICO says that many under-13s use(d) the platform.

In July, when the Online Safety Act came into effect, Reddit added an age gate of 18 for “mature” content. Unlike many other social media sites that are just giant pools of content sorted by curation or algorithm, Reddit is a large set of distinct subReddits. Each of these communities has its own rules, social norms, and, most important, human moderators. Because of this, it’s comparatively easy to mark a particular subReddit as “for adults only”. After the July change, anyone in the UK wishing to access one of those subReddits was asked to submit a selfie or an image of a government-issued ID in order to prove their age.

The ICO’s findings state that Reddit failed to protect under-13s from accessing content that placed them at risk; that it processed under-13s’ data unlawfully (because they are too young to meaningfully consent); and that a simple statement is not a sufficient age verification mechanism (which is made clear in the OSA).

A Reddit spokesperson told the Guardian: “The ICO’s insistence that we collect more private information on every UK user is counterintuitive and at odds with our strong belief in our users’ online privacy and safety.”

I take their point; I’d rather skip the “mature” content than bear the privacy risk of uploading personal data to whatever third-party company Reddit is using for age verification. Last July, I decided I would just be a child. (Although: my Reddit account dates to 2015, so they could just do the math.)

Turns out, it may have been a wise decision. Reddit, saying it didn’t want to hold users’ personal data, chose the age verification provider Persona.

Persona deserves a look. Last week, Discord announced it would begin treating all users as teens until they’d been verified, also using Persona. The result, as Ashley Belanger reports at Ars Technica, was a user backlash. First, because last time Discord tried this, its now-former age verification provider’s pile of 70,000 users’ age check information was hacked.

Second, because The Rage reports that a group of security researchers found a Persona front end exposed to the open Internet on a US government server. On examination, that code shows that Persona performs 269 different verification checks and scours the Internet and government sources using your selfie and facial recognition. Discord has now announced it will delay introducing age verification – and won’t be using Persona after an apparently unsatisfactory trial in the UK last year. In a blog posting, Discord says that, like Reddit, it does not want to know its users’ identification details. It is adding more verification options.

If the world had already had a set of established trustworthy companies that specialized in age verification when the OSA came into effect, then it would make sense to turn to them to provide that service. But we aren’t in that situation. Instead, although providers have been working for more than a decade to build such systems, their deployment at scale is new.

Part of keeping children – and the rest of us – safe is protecting security and privacy, and child safety campaigners’ refusal to accept this has been an issue for decades. Creating new privacy risks doesn’t keep anyone safer, including children.

Illustrations: Six-panel early 1970s cartoon strip, “What the User Wanted”.

Wendy M. Grossman is an award-winning journalist. Her Web site has an extensive archive of her books, articles, and music, and an archive of earlier columns in this series. She is a contributing editor for the Plutopia News Network podcast. Follow on Mastodon or Bluesky.