UK prime minister Keir Starmer’s desire to bring in a UK digital ID is awake again with the announcement that the government plans to make the IDs available for “a handful of uses” before the next general election, due by 2029. The requisite consultation closes May 5.

At Computer Weekly, Lis Evenstad adds a summary and detail about the consultation. Government by app! What could possibly go wrong?

Among other new information in the consultation: the age for being able to get a digital ID could drop below 18, perhaps even to issuance at birth. There might be a single unique identifier to enable linking data throughout government systems. Darren Jones, the prime minister’s chief secretary, talks smoother access to government services and the ability to share only the piece of data that’s needed for a specific purpose, but not about the risks of tying everything to a single identifier whose compromise can reverberate throughout your life.

In his Guardian piece, Stacey quotes Jones, who positions the digital ID as a way to improve fairness in access to government services, which he says accrue disproportionately to “pushy” people with time, patience, and energy.

This sounds good until you read Government Digital Service co-founder Tom Loosemore’s blog, where he notes that creating that sort of stonewalling bureaucracy is often a deliberate strategy to manage demand. Loosemore argues that agentic AI will force an end to this strategy because agents will have unlimited patience and “reduce the cost to citizens of appeals, challenges, and calculations etc to near-zero”. Instead of having to manually dig through financial records, Loosemore finds in an experiment that an AI agent can scan the documents, find the information, and present only the data required to establish the citizen’s entitlement to benefits.

He believes AI agents will also bring a new level of transparency (and perhaps “pushiness”): “AI Agents will always dig out that 93 page PDF of guidance hidden.” Governments, he writes, will be forced to “clarify and tighten policies & processes, with all the painful political trade-offs therein”.

Or: will budget-protecting governments adopt their own agentic AIs to move and re-bury the stuff they don’t want applicants to find and recalibrate their requirements to make them resistant to automation? Seems just as likely, really.

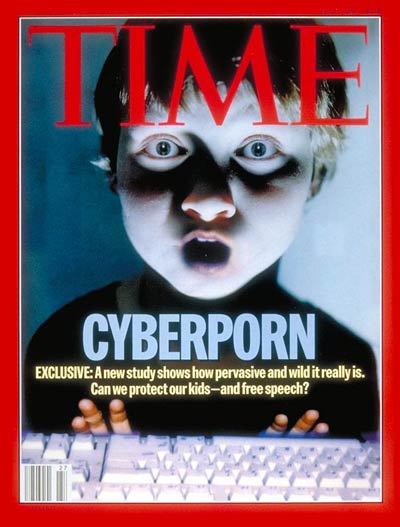

While all that was going on, significant votes took place on the future of access to online content. On Tuesday, Jennifer McKiernan reports at the BBC, MPs rejected the proposed social media ban for under-16s, which would have been added to the Children’s Wellbeing and Schools bill. Instead, the government is continuing to collect information from the consultation it launched on March 2, which closes on May 26.

Some MPs seem to have been persuaded by the argument – mooted by, among others, the National Society for the Prevention of Cruelty to Children – that banning children from social media will simply push them to find darker, less visible unregulated online spaces with less in the way of protection or moderation. The Commons did, however, support a government proposal to give the relevant ministers greater powers to restrict or ban children’s access to social media services and chatbots, limit their use of VPNs, and change the “age of digital consent”. The Children’s Wellbeing and Schools bill now goes back to the Lords for more discussion.

As an unelected body, in recent years the House of Lords has often been a damper on hastily-passed legislation in response to political trends, but here they’re leading. The social media ban passed there. This week, as Dev Kundaliya reports at Computing, the Lords voted on two amendments, one to the Crime and Policing bill, the other to the Children’s Wellbeing and Schools bill. At the Online Safety Act Network blog, University of Essex professor Lorna Woods explains these in detail. The first would enable the government to amend the Online Safety Act to “minimize or mitigate the risks of harm to individuals” from illegal AI-generated content. The second would give the government latitude to change the age of digital consent.

Woods’ main point is the extreme power being given to the government here to bypass Parliament. She calls the clauses Henry VIII powers – that is, powers that let the government change or repeal an Act of Parliament without consulting Parliament. The government’s official justification is that it needs greater flexibility to adapt on the fly to new technologies and online harms. Or to bar access to stuff it doesn’t like, presumably.

The Open Rights Group agrees, calling the plan powers to restrict the entire Internet. ORG also cites a March open letter from 400 scientists and calls for a moratorium on age assurance to learn more about the technological hazards and social impact and for adopting alternative measures in the meantime, such as regulating algorithmic manipulation and providing parents with support.

At DefendDigitalMe, Jen Persson points out that the books already contain laws enabling considerable digital control of children; surveillance, she writes, is being “dressed as ‘safety’”. None of this, she writes, is compatible with children’s *rights*, which don’t seem to get much of a look-in.

Illustrations: Henry VIII, as painted by Hans Holbein the Younger (via Wikimedia).

Also this week: TechGrumps 3.38, The Bettification of Everything.

Wendy M. Grossman is an award-winning journalist. Her Web site has an extensive archive of her books, articles, and music, and an archive of earlier columns in this series. She is a contributing editor for the Plutopia News Network podcast. Follow on Mastodon or Bluesky.