In 2008, when the recording industry was successfully lobbying for an extension to the term of copyright to 95 years, I wrote about a spectacular unfairness that was affecting numerous folk and other musicians. Because of my own history and sometimes present with folk music, I am most familiar with this area of music, which aside from a few years in the 1960s has generally operated outside of the world of commercial music.

The unfairness was this: the remnants of a label that had recorded numerous long-serving and excellent musicians in the 1970s were squatting on those recordings and refusing to either rerelease them or return the rights. The result was both artistic frustration and deprivation of a sorely-needed source of revenue.

One of these musicians is the Scottish legend Dick Gaughan, who had a stroke in 2016 and was forced to give up performing. Gaughan, with help from friends, is taking action: a GoFundMe is raising the money to pay “serious lawyers” to get his rights back. Whether one loved his early music or not – and I regularly cite Gaughan as an important influence on what I play – barring him from benefiting from his own past work is just plain morally wrong. I hope he wins through; and I hope the case sets a precedent that frees other musicians’ trapped work. Copyright is supposed to help support creators, not imprison their work in a vault to no one’s benefit.

This has been the first week of requiring age verification for access to online content in the UK; the law came into effect on July 25. Reddit and Bluesky, as noted here two weeks ago, were first, but with Ofcom starting enforcement, many are following. Some examples: Spotify; X (exTwitter); Pornhub.

Two classes of problems are rapidly emerging: technical and political. On the technical side, so far it seems like every platform is choosing a different age verification provider. These AVPs are generally unfamiliar companies in a new market, and we are being asked to trust them with passports, driver’s licenses, credit cards, and selfies for age estimation. Anyone who uses multiple services will find themselves having to widely scatter this sensitive information. The security and privacy risks of this should be obvious. Still, Dan Milmo reports at the Guardian that AVPs are already processing five million age checks a day. It’s not clear yet if that’s a temporary burst of one-time token creation or a permanently growing artefact of repetitious added friction, like cookie banners.

X says it will examine users’ email addresses and contact books to help estimate ages. Some systems reportedly send referring page links, opening the way for the receiving AVP to store these and build profiles. Choosing a trustworthy VPN can be tricky, and these intermediaries are in a position to log what you do and exploit the results.

The BBC’s fact-checking service finds that a wide range of public interest content, including news about Ukraine and Gaza and Parliamentary debates, is being blocked on Reddit and X. Sex workers see adults being locked out of legal content.

Meanwhile, many are signing up for VPNs at pace, as predicted. The spike has led to rumors that the government is considering banning them. This seems unrealistic: many businesses rely on VPNs to secure connections for remote workers. But the idea is alarming; its logical extension is the war on general-purpose computation Cory Doctorow foresaw as a consequence of digital rights management in 2011. A terrible and destructive policy can serve multiple masters’ interests and is more likely to happen if it does.

On the political side, there are three camps. One wants the legislation repealed. Another wants to retain aspects many people agree on, such as criminalizing cyberflashing and some other types of online abuse, and fix its flaws. The third thinks the OSA doesn’t go far enough, and they’re already saying they want it expanded to include all services, generative AI, and private messaging.

More than 466,000 people have signed a petition calling on the government to repeal the OSA. The government responded: thanks, but no. It will “work with Ofcom” to ensure enforcement will be “robust but proportionate”.

Concrete proposals for fixing the OSA’s worst flaws are rare, but a report from the Open Rights Group offers some; it advises an interoperable system that gives users choice and control over methods and providers. Age verification proponents often compare age-gating websites to ID checks in bars and shops, but those don’t require you to visit a separate shop the proprietor has chosen and hand over personal information. At Ctrl-Shift, Kirra Pendergast explains some of the risks.

Surrounding all that is noise. A US lawyer wants to sue Ofcom in a US federal court (huh?). Reform leader Nigel Farage has called for the Act’s repeal, which led technology secretary Peter Kyle to accuse him – and then anyone else who criticizes the act – of being on the side of sexual predators. Kyle told Mumsnet he apologizes to the generation of UK kids who were “let down” by being exposed to toxic online content because politicians failed to protect them all this time. “Never again…”

In other news, this government has lowered the voting age to 16.

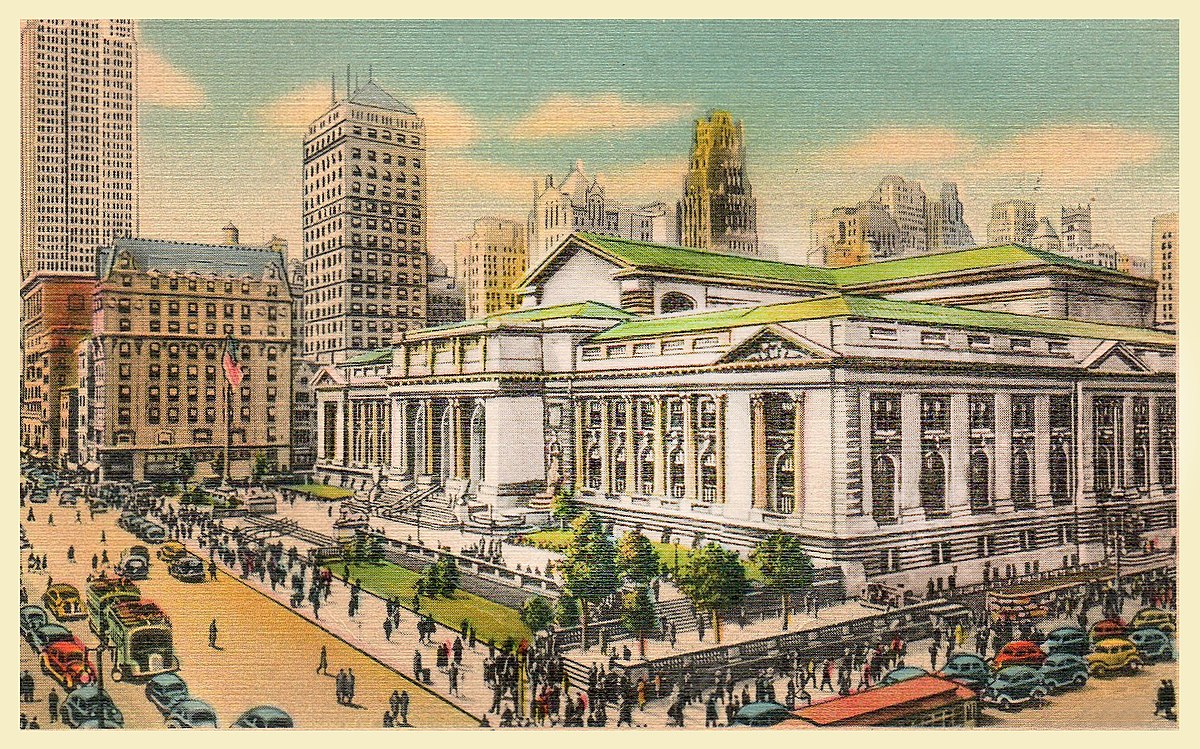

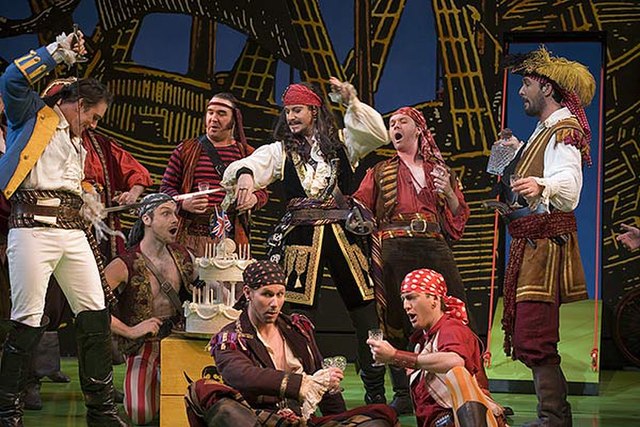

Illustrations: The back cover of Dick Gaughan’s out-of-print 1972 first album, No More Forever.

Wendy M. Grossman is an award-winning journalist. Her Web site has an extensive archive of her books, articles, and music, and an archive of earlier columns in this series. She is a contributing editor for the Plutopia News Network podcast. Follow on Mastodon or Bluesky.