The debates over children’s use of social media, screens, and phones continue, exacerbated in the UK by ongoing Parliamentary scrutiny of the Children’s Wellbeing and Schools bill and continuing disgust over Grok’s sexualized image generation. Robert Booth reports at the Guardian that the Center for Countering Digital Hate estimates that Grok AI generated 3 million sexualized images in under two weeks and that a third of them are still viewable on X. In that case, X and Grok appear to be a more general problem than children’s access.

We continue to need better evidence establishing causality or its absence. This week, researchers from the Bradford Centre for Health Data Science (led by Dan Lewer) and the University of Cambridge (led by Amy Orben) announced a six-week trial that will attempt to find the actual impact on teens of limiting – not ending – social media access. The BBC reports that the trial will split 4,000 Bradford secondary school pupils into two groups. One will download an app that turns off access to services like TikTok and Snapchat from 9pm to 7am and limits use at other times to a “daily budget”. The restrictions won’t include WhatsApp, which the researchers recognize is central to many family groups. The other half will go on using social media as before.

The researchers will compare the two groups by assessing their levels of anxiety, depression, sleep, bullying, and time spent with friends and family.

In earlier research, Orben developed a framework for data donation, which allows teens to understand their own use of social media. Another forthcoming study, Youth Perspectives on Social Media Harms: A Large-Scale Micro-Narrative Study, collects 901 first-person tales from 18- to 22-year-olds in the UK. From these Orben’s group derive four types of harm: harms from other people’s behavior, personal harmful behavior evoked by social media, harms related to the content they encounter, and harms related to platform features. In the first category they include bullying and scams; in the second, compulsive use and social comparison; in the third, graphic material; and in the fourth, algorithmic manipulation. They also note the study’s limitations. A longer-term or differently-timed study might show different effects – during the study period the 2024 US presidential election took place. The teens’ stories don’t establish causality. Finally, there may be other harms not captured in this study.

The most important element, however, is that they sought the perspective of young people themselves, who are to date rarely heard in these discussions.

As this research begins, at Techdirt Mike Masnick reports on two new finished papers also covering teens and social media. The first, Social Media Use and Well-Being Across Adolescent Development, published in JAMA Pediatrics, is a three-year study of 100,991 Australian adolescents to find whether well-being was associated with social media use. The researchers, from the University of South Australia, found a U-shaped curve: moderate social media use was associated with the best outcomes, while both the highest users and the non-users showed less well-being. Girls benefited increasingly from moderate social media use from mid-adolescence onwards, while boys’ non-use became increasingly problematic, leading to worse outcomes than high use by their late teens.

The second, a study from the University of Manchester published in the Journal of Public Health, followed a group of 25,000 11- to 14-year-olds to find out whether the use of technology such as social media and gaming accurately predicted later mental health issues. The study found no evidence that heavier use of social media or gaming led to increased symptoms of anxiety or depression in the following year.

In his discussion of these two papers, Masnick argues that this research gives weight to his contention that the widespread claim that social media is inherently harmful is wrong.

In the UK and elsewhere, however, politicians are proceeding on the basis that social media *is* inevitably harmful. This week, the government announced a consultation on children’s use of technology. The consultation seems, as Carly Page writes at The Register, geared toward increasing restrictions. Also this week, the House of Lords voted 261 to 150, defeating the government to add an amendment to the Children’s Wellbeing and Schools bill that would require social media services to add age verification to block under-16s from accessing them within a year. MPs will now have to vote to remove the amendment or it will become law, a backdoor preemption of the House of Commons’ prerogative to legislate.

UK prime minister Keir Starmer has been edging toward a social media ban for under-16s, now with added pressure from not only the Lords but also the Conservative Party leader, Kemi Badenoch, and 61 MPs who sent an open letter supporting a ban like the one in Australia. Ofcom reports that 22% of children aged eight to 17 have a false user age of over 18 – but also that often it’s with their parents’ help. Would this be different under a national ban?

Starmer reportedly wants to delay deciding until evidence from Australia and, one presumes, from the consultation, is available. A sensible idea we hope is not doomed to failure.

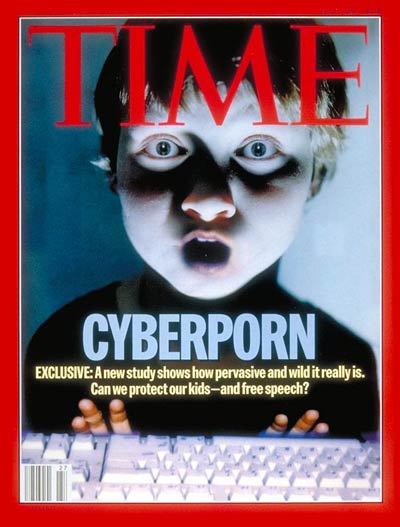

Illustrations: Time magazine’s 1995 “Cyberporn” cover, which raised early alarm about kids online. Based on a fraudulent study, it nonetheless influenced policy-making for some years.

Also this week:

At the Plutopia podcast, we interview Dave Evans on his work to combat misinformation.

Wendy M. Grossman is an award-winning journalist. Her Web site has an extensive archive of her books, articles, and music, and an archive of earlier columns in this series. She is a contributing editor for the Plutopia News Network podcast. Follow on Mastodon or Bluesky.