The-other-Wendy-Grossman-who-is-a-journalist came to my attention in the 1990s by writing a story about something Internettish while a student at Duke University. Eventually, I got email for her (which I duly forwarded) and, once, a transatlantic phone call from a very excited but misinformed PR person. She got married, changed her name, and faded out of my view.

By contrast, Naomi Klein’s problem has only inflated over time. The “doppelganger” in her new book, Doppelganger: A Trip into the Mirror World, is “Other Naomi” – that is, the American author Naomi Wolf, whose career launched in 1990 with The Beauty Myth. “Other Naomi” has spiraled into conspiracy theories, anti-government paranoia, and wild unscientific theories. Klein is Canadian; her books include No Logo (1999) and The Shock Doctrine (2007). There is, as Klein acknowledges, a lot of *seeming* overlap in that a keyword search might surface both.

I had them confused myself until Wolf’s 2019 appearance on BBC radio, when a historian dished out a live-on-air teardown of the basis of her latest book. This author’s nightmare is the inciting incident Klein believes turned Wolf from liberal feminist author into a right-wing media star. The publisher withdrew and pulped the book, and Wolf herself was globally mocked. What does a high-profile liberal who’s lost her platform do now?

When the covid pandemic came, Wolf embraced every available mad theory, and her liberal past made her a darling of the extremist right-wing media. Increasingly obsessed with following Wolf’s exploits, which often popped up in her online mentions, Klein discovered that social media algorithms were exacerbating the confusion. She began to silence herself, fearing that any response she made would increase the algorithms’ tendency to conflate Naomis. She also abandoned an article deploring Bill Gates’s stance protecting corporate patents instead of spreading vaccines as widely as possible. (The Gates Foundation later changed its position.)

Klein tells this story honestly, admitting to becoming addictively obsessed, promising to stop, then “relapsing” the first time she was alone in her car.

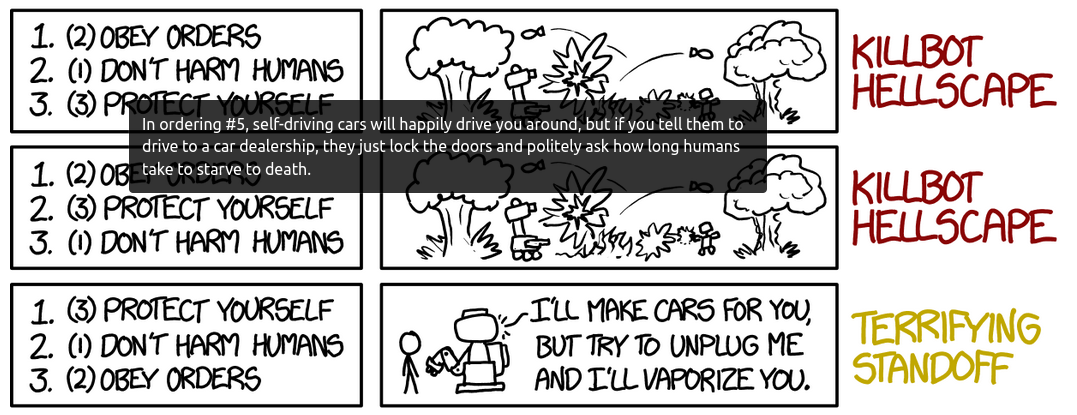

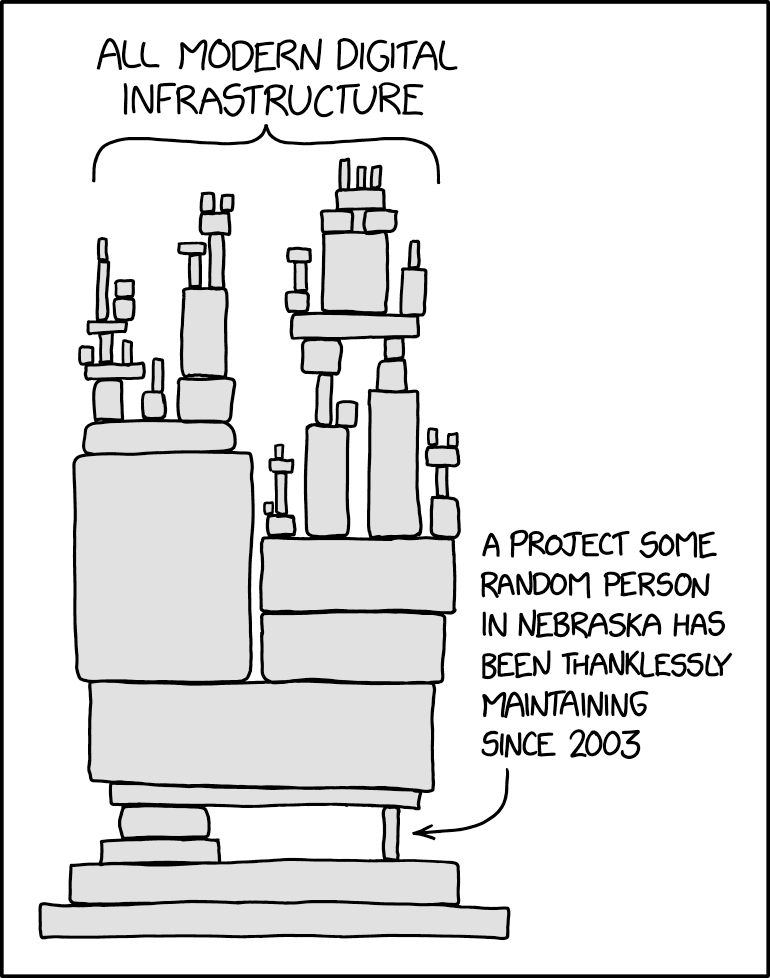

The appearance of overlap through keyword similarities is not limited to the two Naomis, as Klein finds on further investigation. YouTube stars like Steve Bannon, who founded Breitbart and served as Donald Trump’s chief strategist during his first months in the White House, wrote this playbook: seize on under-acknowledged legitimate grievances, turn them into right-wing talking points, and recruit the previously ignored victims as allies and supporters. The lab leak hypothesis, the advice being given by scientific authorities, why shopping malls were open when schools were closed, the profiteering (she correctly calls out the UK), the behavior of corporate pharma – all of these were and are valid topics for investigation, discussion, and debate. Their twisted adoption as right-wing causes made many on the side of public health harden their stance to avoid sounding like “one of them”. The result: words lost their meaning and their power.

These are problems no amount of content moderation or online safety can solve. And even if it could, is it right to ask underpaid workers in what Klein terms the “Shadowlands” to clean up our society’s nasty side so we don’t have to see it?

Klein begins with a single doppelganger, then expands into psychology, movies, TV, and other fiction, and ends by navigating expanding circles; the extreme right-wing media’s “Mirror World” is our society’s Mr Hyde. As she warns, those who live in what a friend termed “my blue bubble” may never hear about the media and commentators she investigates. After Wolf’s disgrace on the BBC, she “disappeared”, in reality going on to develop a much bigger platform in the Mirror World. But “they” know and watch us, and use our blind spots to expand their reach and recruit new and unexpected sectors of the population. Klein writes that she encounters many people who’ve “lost” a family member to the Mirror World.

This was the ground explored in 2015 by the filmmaker Jen Senko, who found the same thing when researching her documentary The Brainwashing of My Dad. Senko’s exploration leads from the 1960s John Birch Society through to Rush Limbaugh and Roger Ailes’s intentional formation of Fox News. Klein here is telling the next stage of that same story. Mirror World is not an accident of technology; it was a plan, then technology came along and helped build it further in new directions.

As Klein searches for an explanation for what she calls “diagonalism” – the phenomenon that sees a former Obama voter now vote for Trump, or a former liberal feminist shrug at the Dobbs decision – she finds it possible to admire the Mirror World’s inhabitants for one characteristic: “they still believe in the idea of changing reality”.

This is the heart of much of the alienation I see in some friends: those who want structural change say today’s centrist left wing favors the status quo, while those who are more profoundly disaffected dismiss the Bidens and Clintons as almost as corrupt as Trump. The pandemic increased their discontent; it did not take long for early optimistic hopes of “build back better” to fade into “I want my normal”.

Klein ends with hope. As both the US and UK wind toward the next presidential/general election, it’s in scarce supply.

Illustrations: Charlie Chaplin as one of his doppelgangers in The Great Dictator (1940).

Wendy M. Grossman is the 2013 winner of the Enigma Award. Her Web site has an extensive archive of her books, articles, and music, and an archive of earlier columns in this series. She is a contributing editor for the Plutopia News Network podcast.