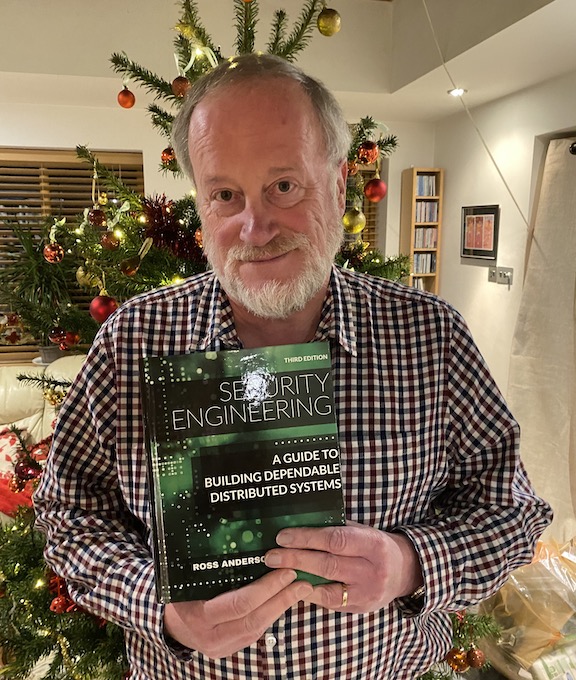

RIP Ross J. Anderson, who died on March 28 at 67 and leaves behind a giant smoking crater in the fields of security engineering and digital rights activism. For the former, he was a professor of security engineering at Cambridge University and Edinburgh University, a Fellow of the Royal Society, and a recipient of the BCS Lovelace medal. His giant textbook Security Engineering is a classic. In digital rights activism, he founded the Foundation for Information Policy Research (see also tenth anniversary and 15th anniversary) and the UK Crypto mailing list, and, understanding that the important technology laws were being made at the EU level, he pushed for the formation of European Digital Rights to act as an umbrella organization for the national digital rights organizations springing up in many countries. He was also one of the pioneers in security economics, and founded the annual Workshop on the Economics of Information Security, convening on April 8 for the 23rd time.

One reason Anderson was so effective in the area of digital rights is that he had the ability to look forward and see the next challenge while it was still forming. Even more important, he had an extraordinary ability to explain complex concepts in an understandable manner. You can experience this for yourself at the YouTube channel where he posted a series of lectures on security engineering or by reading any of the massive list of papers available at his home page.

He had a passionate and deep-seated sense of injustice. In the 1980s and 1990s, when customers complained about phantom ATM withdrawals and the banks tried to claim their software was infallible, he not only conducted a detailed study but adopted fraud in payment systems as an ongoing research interest.

He was a crucial figure in the fight over encryption policy, opposing key escrow in the 1990s and “lawful access” in the 2020s, for the same reasons: the laws of mathematics say that there is no such thing as a hole only good guys can exploit. His name is on many key research papers in this area.

In the days since his death, numerous former students and activists have come forward with stories of his generosity and wit, his eternal curiosity to learn new things, and the breadth and depth of his knowledge. And also: the forthright manner that made him cantankerous.

I think I first encountered Ross at the 1990s Scrambling for Safety events organized by Privacy International. He was slow to trust journalists, shall we say, and it was ten years before I felt he’d accepted me. The turning point was a conference where we both arrived at the lunch counter at the same time. I happened to be out of cash, and he bought me a sandwich.

Privately, in those early days I sometimes referred to him as the “mad cryptographer” because interviews with him often led to what seemed like off-the-wall digressions. One such led to an improbable story about the US Embassy in Moscow being targeted with microwaves in an attempt at espionage. This, I found later, was true. Still, I felt it best not to quote it in the interests of getting people to listen to what he was saying about crypto policy.

My favorite Ross memory, though, is this: one night shortly before Christmas maybe ten years ago – by this time we were friends – I interviewed him over Skype for yet another piece. It was late in Britain, and I’m not sure he was fully sober. Before he would talk about security, knowing of my interest in folk music, he insisted on playing several tunes on the Scottish chamber pipes. He played well. Pipe music was another of his consuming interests, and he brought to it as much intensity and scholarship as he did to all his other passions.

Ross J. Anderson, b. September 15, 1956, d. March 28, 2024.