This week saw a small gathering to celebrate the 25th anniversary (more or less) of the Foundation for Information Policy Research, a think tank led by Cambridge and Edinburgh University professor Ross Anderson. FIPR’s main purpose is to produce tools and information that campaigners for digital rights can use. Obdisclosure: I am a member of its advisory council.

What, Anderson asked those assembled, should FIPR be thinking about for the next five years?

When my turn came, I said something about the burnout that comes to many campaigners after years of fighting the same fights. Digital rights organizations – Open Rights Group, EFF, Privacy International, to name three – find themselves trying to explain the same realities of math and technology decade after decade. Small wonder so many burn out eventually. The technology around the debates about copyright, encryption, and data protection has changed over the years, but in general the fundamental issues have not.

In part, this is because what people want from technology doesn’t change much. A tangential example of this presented itself this week, when I read the following in the New York Times, written by Peter C Baker about the “Beatles'” new mash-up recording:

“So while the current legacy-I.P. production boom is focused on fictional characters, there’s no reason to think it won’t, in the future, take the form of beloved real-life entertainers being endlessly re-presented to us with help from new tools. There has always been money in taking known cash cows — the Beatles prominent among them — and sprucing them up for new media or new sensibilities: new mixes, remasters, deluxe editions. But the story embedded in “Now and Then” isn’t “here’s a new way of hearing an existing Beatles recording” or “here’s something the Beatles made together that we’ve never heard before.” It is Lennon’s ideas from 45 years ago and Harrison’s from 30 and McCartney and Starr’s from the present, all welded together into an officially certified New Track from the Fab Four.”

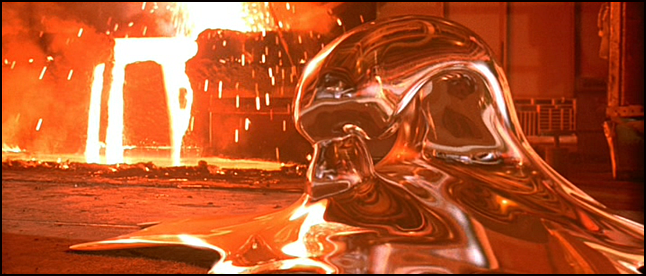

I vividly remembered this particular vision of the future because just a few days earlier I’d had occasion to look it up – a March 1992 interview for Personal Computer World with the ILM animator Steve Williams, who the year before had led the team that produced the liquid metal man for the movie Terminator 2. Williams imagined CGI would become pervasive (as it has):

“…computer animation blends invisibly with live action to create an effect that has no counterpart in the real world. Williams sees a future in which directors can mix and match actors’ body parts at will. We could, he predicts, see footage of dead presidents giving speeches, films starring dead or retired actors, even wholly digital actors. The arguments recently seen over musicians who lip-synch to recordings during supposedly ‘live’ concerts are likely to be repeated over such movie effects.”

Williams’ latest work at the time was on Death Becomes Her. Among his calmer predictions was that as CGI became increasingly sophisticated the boundary between computer-generated characters and enhancements would become invisible. Thirty years on, the big excitement recently has been Harrison Ford’s de-aging for Indiana Jones and the Dial of Destiny. That used CGI, AI, and other tools to digitally swap in his face from 1980s footage.

Side note: in talking about the Ford work to Wired, ILM supervisor Andrew Whitehurst, exactly like Williams in 1992, called the new technology “another pencil”.

Williams also predicted endless legal fights over copyright and other rights. That at least was spot-on; AI and the perpetual reuse of retained footage without further payment are part of what the recent SAG-AFTRA strikes were about.

Yet the problem here isn’t really technology; it’s the incentives. The eternal desire of Hollywood’s businessfolk is to guarantee their return on investment, and they think recycling old successes is the safest way to do that. Closer to digital rights, law enforcement always wants greater access to private communications; the frustration is that incoming generations of politicians don’t understand the laws of mathematics any better than their predecessors in the 1990s.

Many of the speakers focused on the issue of getting government to listen to and understand the limits of technology. Increasingly, though, a new problem is that, as Bruce Schneier writes in his latest book, A Hacker’s Mind, everyone has learned to think like hackers and subvert the systems they’re supposed to protect. The Silicon Valley mantra of “ask forgiveness, not permission” has become pervasive, whether it’s a technology platform deciding to collect masses of data about us or a police force deciding to stick a live facial recognition pilot next to Oxford Circus tube station. Except no one asks for forgiveness either.

Five years ago, at FIPR’s 20th anniversary, when GDPR was new, Anderson predicted (correctly) that the battles over encryption would move to device access. Today, it’s less clear what’s next. Facial recognition represents a step change; it overrides consent and embeds distrust in our public infrastructure.

If I were to predict the battles of the next five years, I’d look at the technologies being deployed around European and US borders to surveil migrants. Migrants make easy targets for this type of experimentation because they can’t afford to protest and can’t vote. “Automated suspicion,” Euronews.next calls it. That habit of mind is dangerous.

Illustrations: The liquid metal man in Terminator 2 reconstituting itself.

Wendy M. Grossman is the 2013 winner of the Enigma Award. Her Web site has an extensive archive of her books, articles, and music, and an archive of earlier columns in this series. She is a contributing editor for the Plutopia News Network podcast.
