If you were going to carve up today’s technology giants to create a more competitive landscape, how would you do it? This time the game’s for real. In August, US District Judge Amit Mehta ruled that “Google is a monopolist and has acted as one to maintain its monopoly.” A few weeks ago, the Department of Justice filed preliminary proposals for remedies. These may change before the parties reassemble in court next April.

Antitrust law traditionally aimed to ensure competition in order both to create a healthy business ecosystem and to serve consumers better. “Free” – that is, pay-with-data – online services have resisted antitrust analysis because for decades regulators judged success by whether prices fell.

It’s always tempting to think of breaking monopolists up into business units. For example, key moments in Meta’s march to huge were its purchases of Instagram (2012) and WhatsApp (2014), which turned baby competitors into giant subsidiaries. In the EU, regulators’ permission was based on a promise, which Meta later broke, not to merge the companies’ databases. Separating them out again to create three privacy-invading behemoths in place of one is more like the sorcerer’s apprentice than a win.

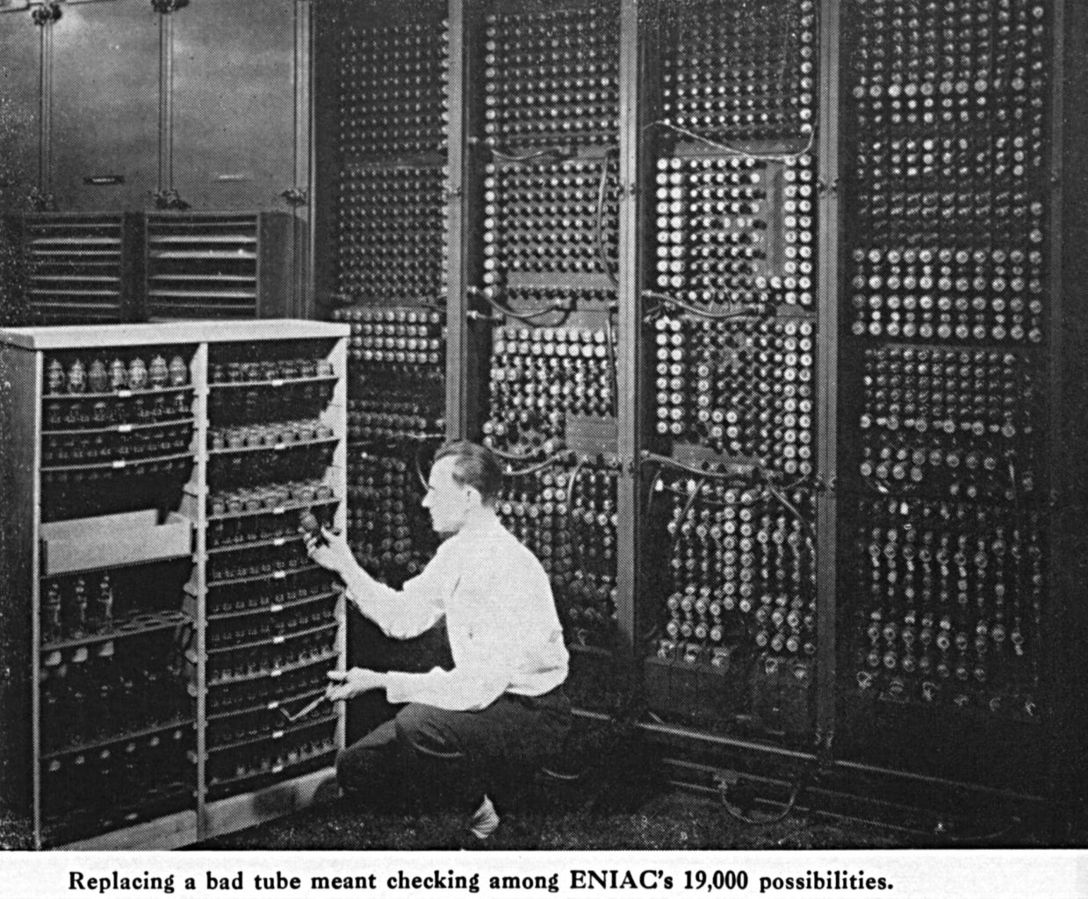

In the late 1990s case against Microsoft, which ended in a settlement, many speculated about breaking it up into Baby Bills. The key question: create clones, or split the Windows operating system from the Office software?

In 2013, at Computerworld, Gregg Keizer asked experts to imagine the post-Microsoft-breakup world. Maybe the Office software company would have ported its products to the iPad. Maybe the clones would eventually have diverged, and one would have come to dominate search. Keizer’s experts generally agreed, though, that the antitrust suit itself had its effects: it slowed the company’s forward progress by making it fear provoking further suits, much as antitrust action had done to IBM before it.

In Google’s case, the key turning point was likely the 2007–2008 acquisition of online advertising pioneer DoubleClick. Google was then ten years old and had been a public company for almost four years. At its IPO, Wall Street pundits had been dismissive, saying it had no customer lock-in and no business model.

Reading Google’s 2008 annual report is an exercise in nostalgia. Amid an explanation of contextual advertising, Google says it has never spent much on marketing because the quality of its products generated word-of-mouth momentum worldwide. This was all true – then.

At the time, privacy advocates opposed the DoubleClick merger. Both the FTC and EU regulators raised concerns but let it go ahead, and DoubleClick became the heart of the advertising business Susan Wojcicki and Sheryl Sandberg built for Google. Despite growing income from its cloud services business, most of Google’s revenue still comes from advertising.

Since then, Mehta ruled, Google has cemented its dominance by paying companies like Apple, Samsung, and Verizon to make its search engine the default on the devices they make and/or sell. Further, Google’s dominance – 90% of search – allows it to charge premium rates for search ads, which in turn enhances its financial advantage. OK, one of the competitors complaining is Microsoft, but others are relative minnows like 15-year-old DuckDuckGo, which competes on privacy, buys TV ads, and hasn’t cracked 1% of the search market. Even Microsoft’s Bing, at number two, has less than 4%. Google can insist that it’s just that good, but complaints that its search results are degrading are everywhere.

Three aspects of the DoJ’s proposals seized the most attention: first, forcing Google to divest the Chrome browser; second, if that’s not enough, forcing it to divest the Android mobile operating system; and third, barring it from paying other companies to make Google search the default. The last of these risks crippling Mozilla and Firefox, and would dent Apple’s revenues, but would not really harm Google. Saving $26.3 billion (the 2021 figure) can’t be *all* bad.

At The Verge, Lauren Feiner summarizes the DoJ’s proposals. At the Guardian, Dan Milmo notes that the DoJ also wants Google to be barred from buying or investing in search rivals, query-based AI, or adtech – no more DoubleClicks.

At Google’s blog, chief legal officer Kent Walker calls the proposals “a radical interventionist agenda”. He adds that they would chill Google’s investment in AI, as if this were a bad thing, when – hello! – one goal is to ensure a competitive market in future technologies. (Less AI investment from Google could even be a good thing generally.)

Finally, Walker claims that divesting Chrome and/or Android would endanger users’ security and privacy, and frets that it would expose Americans’ personal search queries to “unknown foreign and domestic companies”. Adapting a line from the 1980 movie Hopscotch, “You mean, Google’s methods of tracking are more humane than the others?” Relaying a similar reaction from DuckDuckGo’s senior vice-president, Ars Technica’s Ashley Belanger dubs the proposals “Google’s nightmare”.

At Techdirt, Mike Masnick favors DuckDuckGo’s idea of forcing Google to provide access to its search results via an API so competitors can build services on top, as DuckDuckGo does with Bing. Masnick also wants users to become custodians and exploiters of their own search histories. Finally, at Pluralistic, Cory Doctorow likes spinning out – not selling – Chrome. End adtech surveillance, he writes; don’t democratize it.

It’s too early to know what the DoJ will finally recommend. If nothing is done, however, Google will be too rich to fear future lawsuits.

Illustration: Mickey Mouse as the sorcerer’s apprentice in Fantasia (1940).

Wendy M. Grossman is the 2013 winner of the Enigma Award. Her Web site has an extensive archive of her books, articles, and music, and an archive of earlier columns in this series. She is a contributing editor for the Plutopia News Network podcast. Follow on Mastodon or Bluesky.