The recent past is a foreign country; they view the world differently there.

At last week’s We Robot conference on technology, policy, and law, the indefatigably detail-oriented Sue Glueck was the first to call out a reference to the propagation of transparency and accountability by the “US and its allies” as newly out of date. From where we were sitting in Windsor, Ontario, its conjoined fraternal twin, Detroit, Michigan, was clearly visible just across the river. But: recent events.

As Ottawa law professor Teresa Scassa put it, “Before our very ugly breakup with the United States…” Citing Anu Bradford, she went on, “Canada was trying to straddle these two [US and EU] digital empires.” Canada’s human rights and privacy traditions seem closer to those of the EU, even though shared geography means the US and Canada are superficially more similar.

We’ve all long accepted that the “technology is neutral” claim of the 1990s is nonsense – see, just this week, Luke O’Brien’s study at Mother Jones of the far-right origins of the web-scraping facial recognition company Clearview AI. The paper Glueck called out, co-authored in 2024 by Woody Hartzog, wants US lawmakers to take a tougher approach to regulating AI and ban entirely some systems that are fundamentally unfair. Facial recognition, for example, is known to be inaccurate and biased, but improving its accuracy raises new dangers of targeting and weaponization, a reality Cynthia Khoo called “predatory inclusion”. If he were writing this paper now, Hartzog said, he would acknowledge that it’s become clear that some governments, not just Silicon Valley, see AI as a tool to destroy institutions. I don’t *think* he was looking at the American flags across the water.

Later, Khoo pointed to her paper on current negotiations between the US and Canada to develop a bilateral law enforcement data-sharing agreement under the US CLOUD Act. The result could allow US police to surveil Canadians at home, undermining the country’s constitutional human rights and privacy laws.

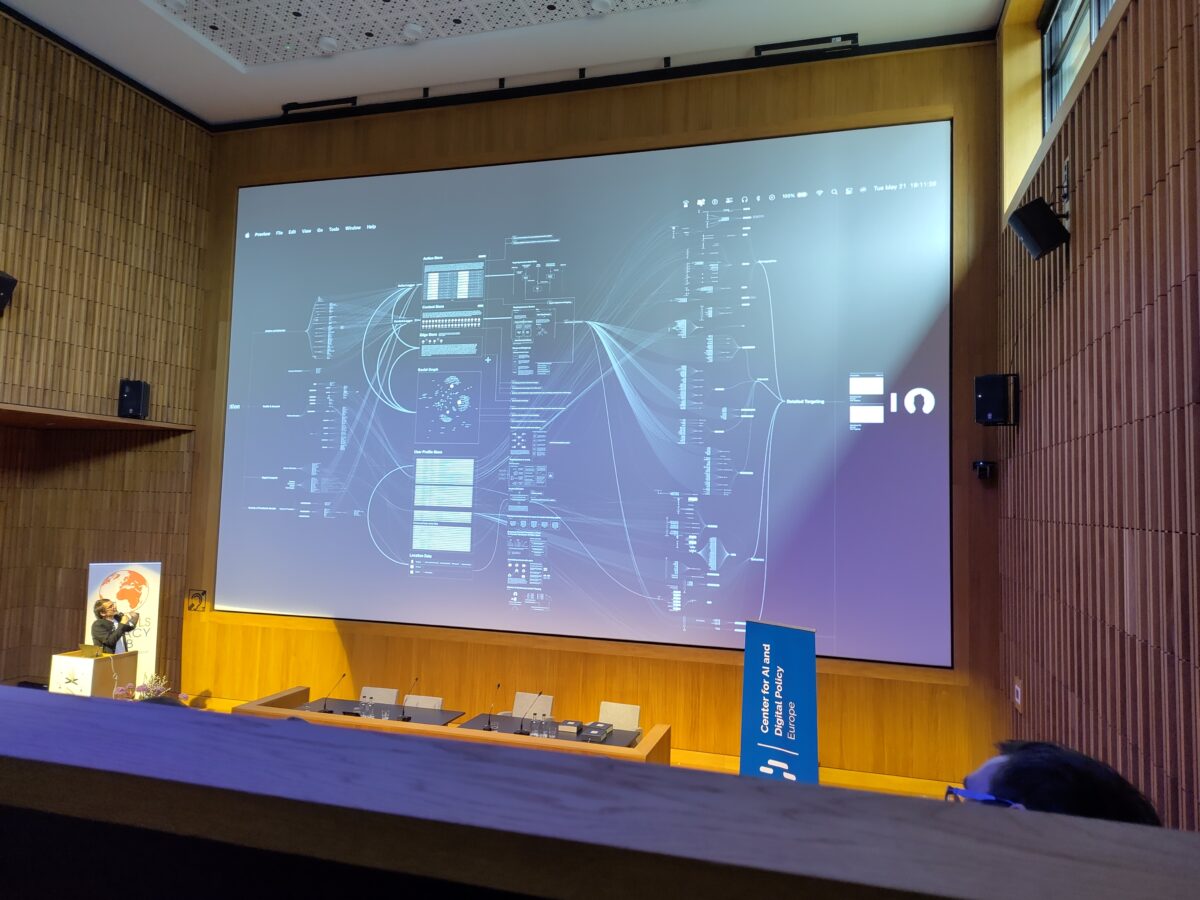

In her paper, Clare Huntington proposed deriving approaches to human relationships with robots from family law. It can, she argued, provide analogies to harms such as emotional abuse, isolation, addiction, invasion of privacy, and algorithmic discrimination. In response, Kate Darling, who has long studied human responses to robots, raised an additional factor exacerbating the power imbalance in such cases: companies, “because people think they’re talking to a chatbot when they’re really talking to a company.” That extreme power imbalance is what matters when trying to mitigate risk (see also Sarah Wynn-Williams’ recent book and Congressional testimony on Facebook’s use of data to target vulnerable teens).

In many cases, however, we are not agents deciding to have relationships with robots but what AJung Moon called “incops”, or “incidentally co-present”. In the case of the Estonian Starship delivery robots you can find in cities from San Francisco to Milton Keynes, that broad category includes human drivers, pedestrians, and cyclists who share their spaces. In a study, Adeline Schneider found that white men tended to be more concerned about damage to the robot, whereas others worried more about communication, the data the robots captured, safety, and security. Delivery robots are, however, typically designed with only direct users in mind, not others who may have to interact with them.

These are all social problems, not technological ones, as conference chair Kristen Thomasen observed. Carys Craig later modified that observation: technology “has compounded the problems”.

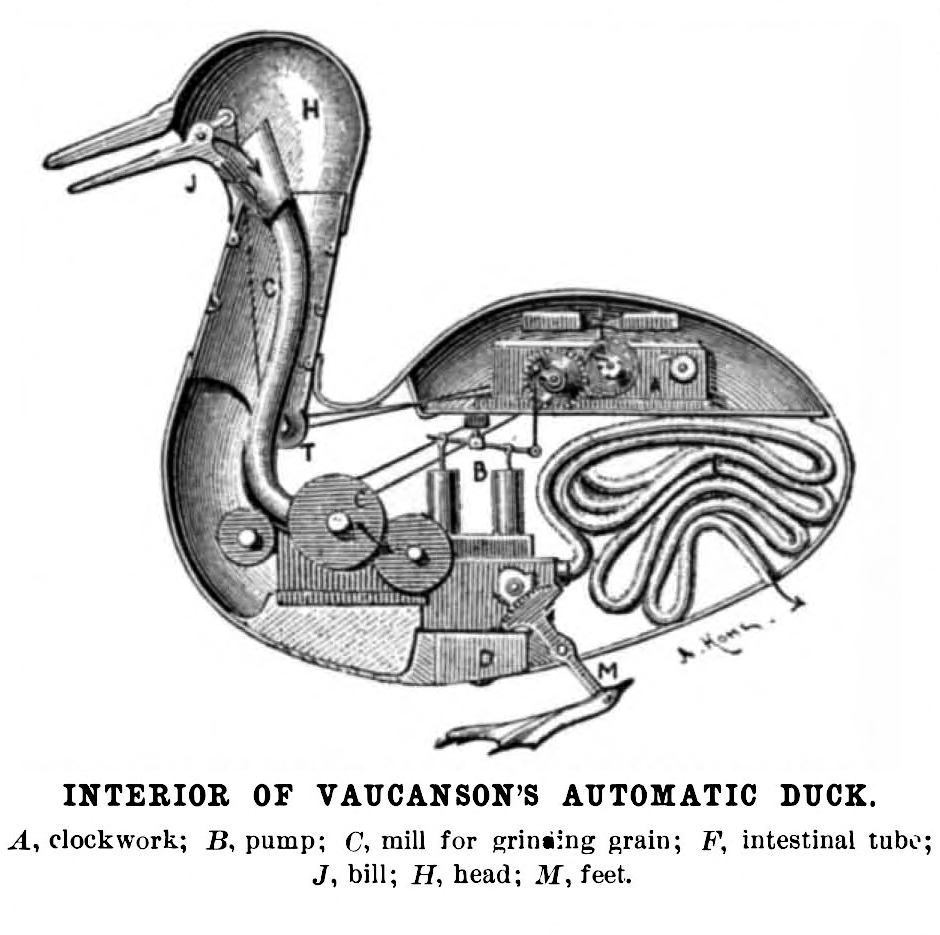

This is the perennial We Robot question: what makes robots special? What qualities require new laws? Just as we asked about the Internet in 1995, when are robots just new tools for old rope, and when do they bring entirely new problems? In addition, who is responsible in such cases? This was asked in a discussion of Beatrice Panattoni’s paper on Italian proposals to impose harsher penalties for crime committed with AI or facilitated by robots. The pre-conference workshop raised the same question. We already know the answer: everyone will try to blame someone or everyone else. But in formulating a legal response, will we tinker around the edges or fundamentally question the criminal justice system? Andrew Selbst helpfully summed up: “A law focusing on specific harms impedes a structural view.”

At We Robot 2012, it was novel to push lawyers and engineers to think jointly about policy and robots. Now, as more disciplines join the conversation, familiar Internet problems surface in new forms. Human-robot interaction is a four-dimensional version of human-computer interaction; I got flashbacks to old hacking debates when Elizabeth Joh wondered in response to Panattoni’s paper if transforming a robot into a criminal should be punished; and a discussion of the use of images of medicalized children for decades in fundraising invoked publicity rights and tricky issues of consent.

Also consent-related: lawyers are starting to use generative AI to draft contracts, a step that Katie Szilagyi and Marina Pavlović suggested further diminishes the bargaining power already lost to “clickwrap”. Automation may remove our remaining ability to object from more specialized circumstances than the terms and conditions imposed on us by sites and services. Consent traditionally depends on a now-absent “meeting of minds”.

The arc of We Robot began with enthusiasm for robots, which waned as big data and generative AI became players. Now, robots/AI are appearing as something being done to us.

Illustrations: Detroit, seen across the river from Windsor, Ontario.

Wendy M. Grossman is the 2013 winner of the Enigma Award. Her Web site has an extensive archive of her books, articles, and music, and an archive of earlier columns in this series. She is a contributing editor for the Plutopia News Network podcast. Follow on Mastodon or Bluesky.