The Promise and the Peril of CRISPR

Edited by Neal Baer

Johns Hopkins University Press

ISBN: 978-1-4214-4930-2

It’s an interesting question: why are there so many articles in which eminent scientists fear an artificial general superintelligence, which is pure fantasy for the foreseeable future, and so few that raise the alarm about human gene editing tools, which are already arriving? The pre-birth genetic selection in the 1997 movie Gattaca is closer to reality than an AGI that decides to kill us all and turn us into paperclips.

In The Promise and the Peril of CRISPR, Neal Baer collects a series of essays considering the ethical dilemmas posed by a technology that could be used to eliminate whole classes of disease and disabilities. The promise is important: gene editing offers the possibility of curing chronic, painful, debilitating congenital conditions. But for everything, there may be a price. A recent episode of HBO Max’s TV series The Pitt showed the pain that accompanies sickle cell anemia. But the gene variant behind that condition also confers protection against malaria in people who carry a single copy, which was an evolutionary advantage in some parts of the world. There may be many more such tradeoffs whose benefits are unknown to us.

Baer started with a medical degree, but quickly found work as a TV writer. He is best known for his work on the first seven years of ER and seasons two through 12 of Law and Order: Special Victims Unit. In his medical career as an academic pediatrician, he writes extensively and works with many health care-related organizations.

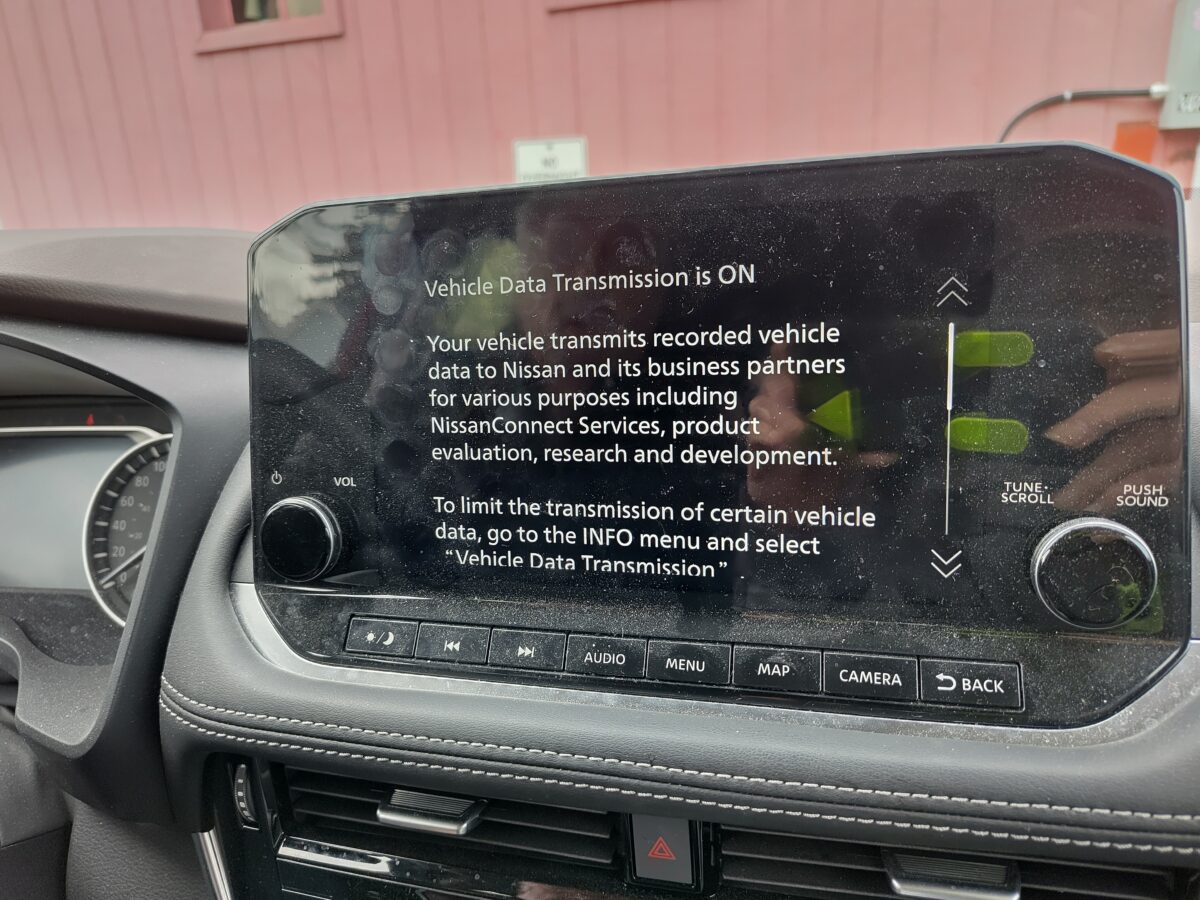

Most books on new technologies like CRISPR (for clustered regularly interspaced short palindromic repeats) are either all hype or all panic. In pulling together the collection of essays that make up The Promise and the Peril of CRISPR, Baer has brought in voices rarely heard in discussions of new technologies. Ethan Weiss tells the story of his daughter, who was born with albinism, which has more difficult consequences than a simple lack of pigmentation. Had the technology been available, he writes, they might have opted to correct the faulty gene that causes it; lacking that, they discovered new richness in life that they would never wish to give up. In another essay, Florence Ashley explores, from several directions, the potential impact on trans people, who might benefit from being able to alter their bodies through genetics rather than through frequent medical interventions such as hormones. And in a third, Krystal Tsosie considers the impact on Indigenous peoples, warning against allowing corporate ownership of DNA.

Other essays consider CRISPR’s potential for conditions such as cystic fibrosis (Sandra Sufian) and deafness (Carol Padden and Jacqueline Humphries), as well as international human rights. One use Baer omits, despite its status as intermittent media fodder since techniques for gene editing were first developed, is performance enhancement in sports. There is so far no imaginable way to test athletes for it. And anyone who’s watched junior sports knows there are definitely parents crazy enough to adopt any technology that will improve their kids’ abilities. He was smart to skip this; it will be a long time before CRISPR is cheap enough and advanced enough to be accessible for that sort of thing.

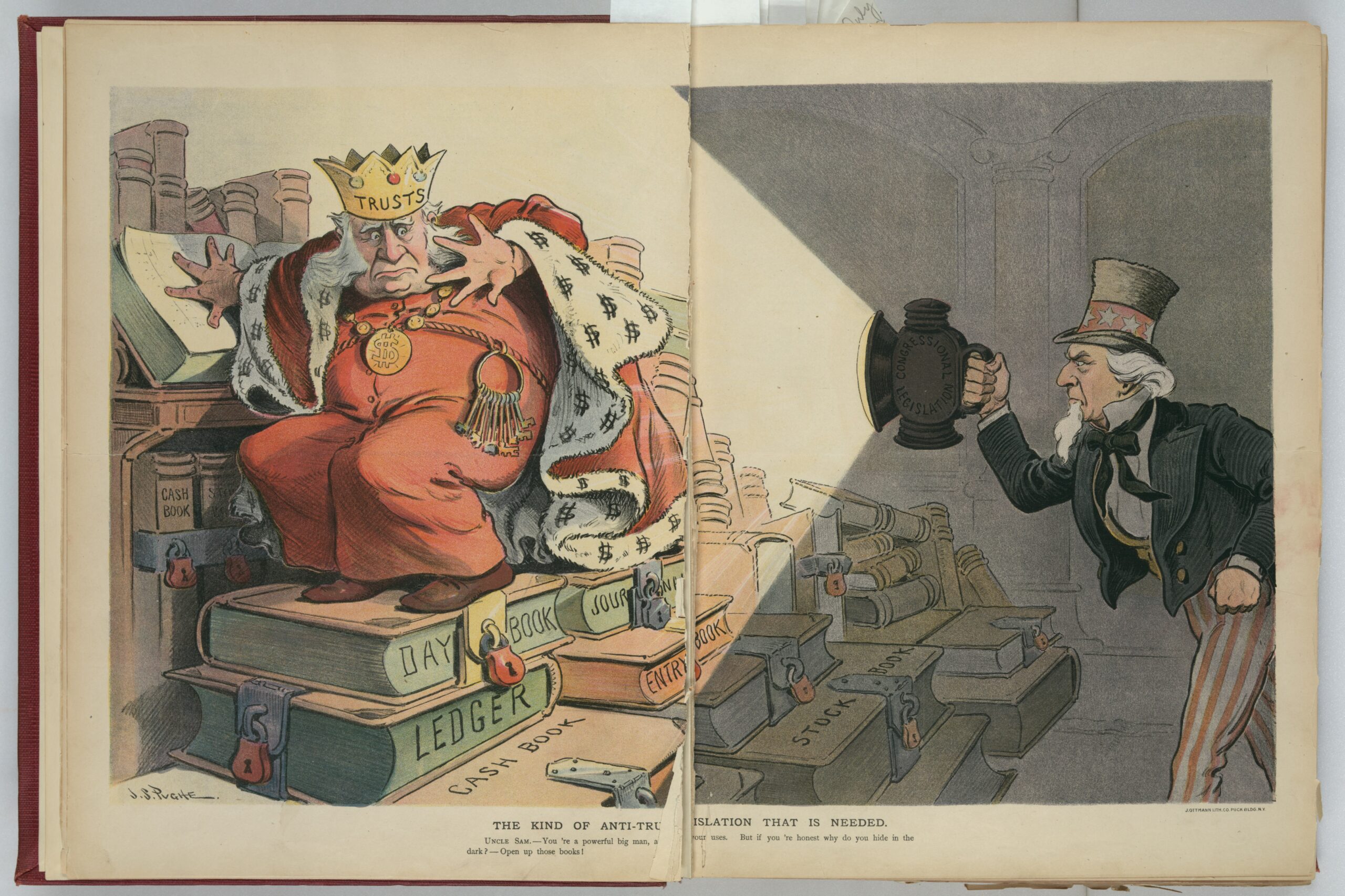

In one essay, molecular biologist Ellen D. Jorgensen discusses a class she co-designed to teach CRISPR to anyone who cared to learn. At the time, the media were focused on its dangers, and she believed that teaching it would help alleviate public fear. Most uses, she writes, are benign. As with previous scientific advances, its impact will depend on who wields it and for what purpose.