Virtual You: How Building Your Digital Twin Will Revolutionize Medicine and Change Your Life

By Peter Coveney and Roger Highfield

Princeton University Press

ISBN: 978-0-691-22327-8

Probably the quickest way to appreciate how much medicine has changed in a lifetime is to pull out a few episodes of TV medical series over the years: the bloodless 1960s Dr Kildare; the 1980s St Elsewhere, which featured a high-risk early experiment in now-routine cardiac surgery; the growing panoply of machines and equipment in E.R. (1994-2009). But there are always more improvements to be made, and around 2000, when the human genome was being sequenced, we heard a lot about the personalized medicine it was supposed to bring. Over time we learned that, as so often with scientific advances, knowing more merely showed us how much more we *didn’t* know – in the genome’s case, about epigenetics, proteomics, and the microbiome. With some exceptions, such as cancers that can be tested for vulnerability to particular drugs, the dream of personalized medicine so far mostly remains just that.

Growing alongside all that have been computer models, most famously used for meteorology and climate change predictions. As Peter Coveney and Roger Highfield explain in Virtual You, models are expected to play a huge role in medicine, too. The best-known use is in drug development, where modeling can help suggest new candidates. But the use that interests Coveney and Highfield is on the personal level: a digital twin for each of us that can be used to determine the right course of treatment by spotting failures in advance, or to help us make better lifestyle choices tailored to our particular genetic makeup.

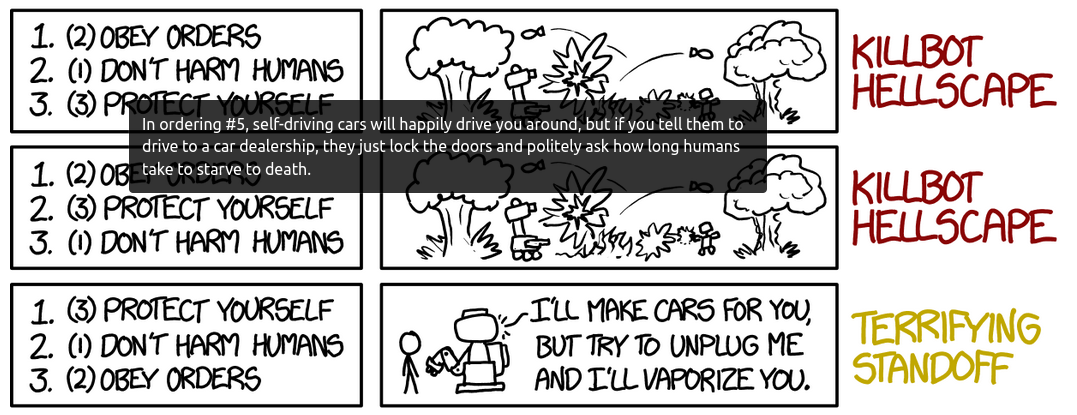

This is not your typical book of technology hype. Instead, it’s a careful, methodical explanation of the mathematical and scientific basis for how this technology will work, and of its state of development in fields ranging from math and physics to biology. As they make clear, developing the technology to create these digital twins is a huge undertaking. Each of us is a massively complex ecosystem, generating masses of data and governed by a vast number of variables. Modeling our analog selves may require more complexity than is even possible with classical digital computers. Coveney and Highfield explain all this meticulously.

It’s not as clear to me as it is to them that virtual twins are the future of mainstream “retail” medicine, especially if, as they suggest, they will be continually updated as our bodies produce new data. Some aspects will be too cost-effective to ignore; ensuring that the most expensive treatments are directed only to those who can benefit will save money for any health service. But the vast computational power and resources likely required to build and maintain a virtual twin for each individual seem prohibitive for all but billionaires. As in engineering, where virtual twins are used for prototyping, or meteorology, where simulations have led to better and more detailed forecasts, the primary uses seem likely to be at the “wholesale” level. That still leaves room for plenty of revolution.