We’re now almost a year on from Rishi Sunak’s AI Summit, which failed to accomplish any of its most likely goals: cement his position as the UK’s prime minister; establish the UK as a world leader in AI fearmongering; or get him the new life in Silicon Valley some commentators seemed to think he wanted.

Arguably, however, it has strengthened the belief that computer systems are “intelligent” – that is, that they understand what they’re calculating. The chatbots based on large language models make that worse, because, as James Boyle cleverly wrote, for the first time in human history, “sentences do not imply sentience”. Mix in paranoia over the state of the world and you get some truly terrifying systems being put into situations where they can catastrophically damage people’s lives. We should know better by now.

The Open Rights Group (I’m still on its advisory council) is campaigning against the Home Office’s planned eVisa scheme. In the previouslies: between 1948 and 1971, people from Caribbean countries, many of whom had fought for Britain in World War II, were encouraged to help the UK rebuild the economy post-war. They are known as the “Windrush generation” after the first ship that brought them. As Commonwealth citizens, they didn’t need visas or documentation; they and their children had the automatic right to live and work here.

Until 1973, when the law changed; later arrivals needed visas. The snag was that earlier arrivals had no idea they had any reason to worry… until the day they discovered, when challenged, that they had no way to prove they were living here legally. That day came in 2017, when then-prime minister Theresa May (who this week joined the House of Lords) introduced the hostile environment. Intended to push illegal immigrants to go home, this law moves the “border” deep into British life by requiring landlords, banks, and others to conduct status checks. The result was that some of the Windrush group – again, legal residents – were refused medical care, denied housing, or deported.

When Brexit became real, millions of Europeans resident in the UK were shoved into the same position: arrived legally, needing no documentation, but in future required to prove their status. This time, the UK issued them documents confirming their status as permanently settled.

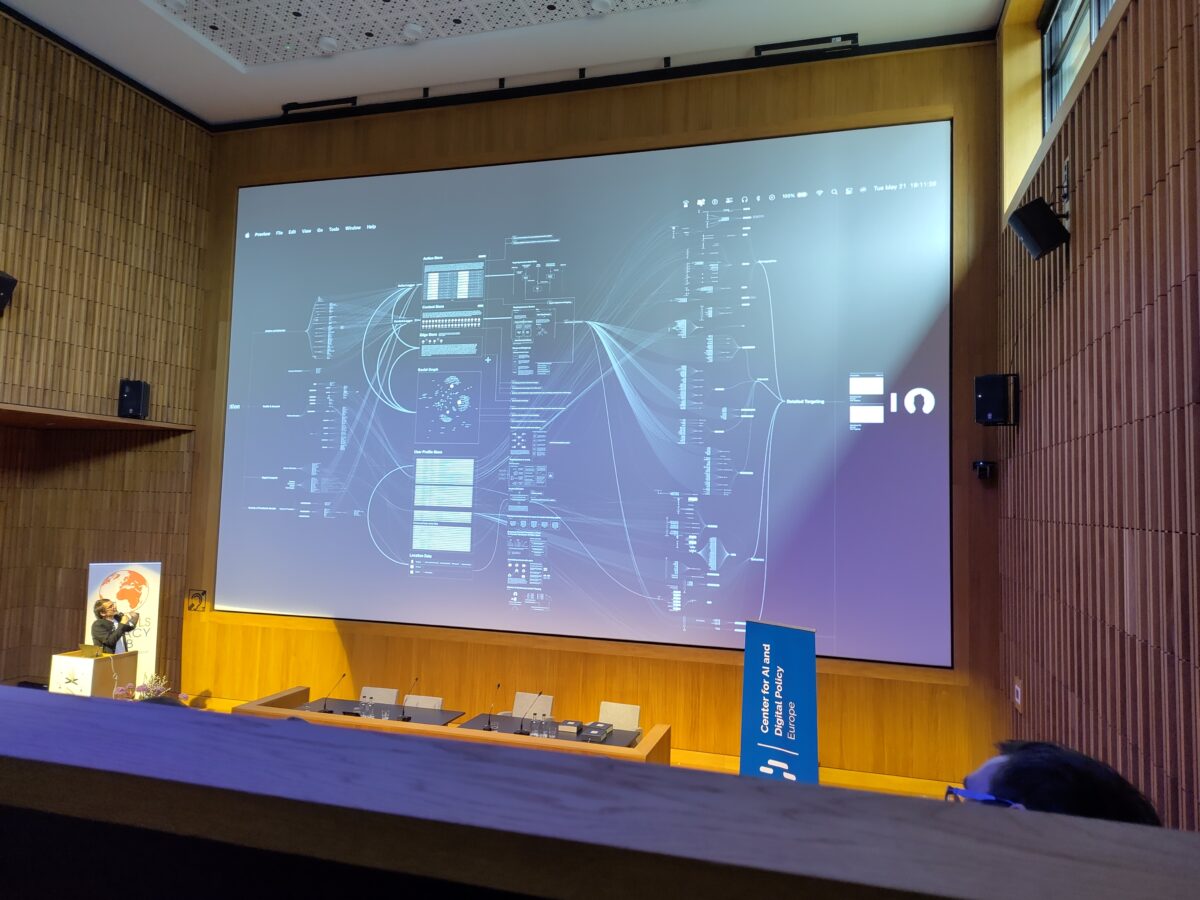

Until December 31, 2024, when all those documents with no expiration date will abruptly expire because the Home Office has a new system that is entirely online. As ORG and the3million explain it, come January 1, 2025, about 4 million people will need online accounts to access the new system, which generates a code to give the bank or landlord temporary access to their status. The new system will apparently hit a variety of databases in real time to perform live checks.

Now, I get that the UK government doesn’t want anyone to be in the country for one second longer than they’re entitled to. But we don’t even have to say, “What could possibly go wrong?” because we already *know* what *has* gone wrong for the Windrush generation. Anyone who has to prove their status off the cuff in time-sensitive situations really needs proof they can show when the system fails.

A proposal like this can only come from an irrational belief in the perfection – or at least, perfectibility – of computer systems. It assumes that Internet connections won’t be interrupted, that databases will return accurate information, and that everyone involved will have the necessary devices and digital literacy to operate it. Even without ORG’s and the3million’s analysis, these are bonkers things to believe – and they are made worse by a helpline that is only available during the UK work day.

There is a lot of this kind of credulity about, most of it connected with “AI”. AP News reports that US police departments are beginning to use chatbots to generate crime reports based on the audio from their body cams. And, says Ars Technica, the US state of Nevada will let AI decide unemployment benefit claims, potentially producing denials that can’t be undone by a court. BrainFacts reports that decision makers using “AI” systems are prone to automation bias – that is, they trust the machine to be right. Of course, that’s just good job security: you won’t be fired for following the machine, but you might for overriding it.

The underlying risk with all these systems, as a security expert might say, is complexity: the more complex a system is, the more susceptible it is to inexplicable failures. There is very little to go wrong with a piece of paper that plainly states your status, for values of “paper” including paper, QR codes downloaded to phones, or PDFs saved to a desktop/laptop. Much can go wrong with the system underlying that “paper”, but, crucially, when a static confirmation is saved offline, managing that underlying complexity can take place when the need is not urgent.

It ought to go without saying that computer systems with a profound impact on people’s lives should be backed up by redundant systems that can be used when they fail. Yet the world the powers that be apparently want to build is one that underlines their power to cause enormous stress for everyone else. Systems like eVisas are as brittle as just-in-time supply chains. And we saw what happens to those during the emergency phase of the covid pandemic.

Illustrations: Empty supermarket shelves in March 2020 (via Wikimedia).

Wendy M. Grossman is the 2013 winner of the Enigma Award. Her Web site has an extensive archive of her books, articles, and music, and an archive of earlier columns in this series. She is a contributing editor for the Plutopia News Network podcast. Follow on Mastodon.