Dark Wire

by Joseph Cox

PublicAffairs (Hachette Group)

ISBNs: 9781541702691 (hardcover), 9781541702714 (ebook)

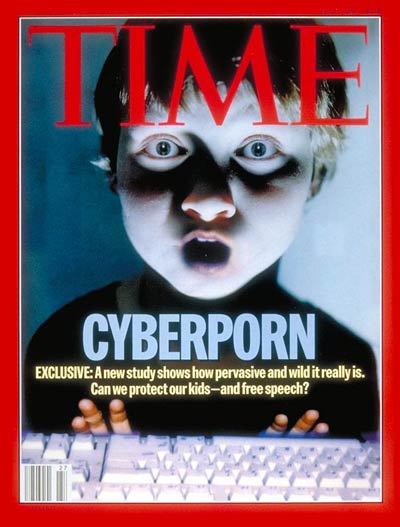

One of the basic principles that emerged as soon as encryption software became available to ordinary individuals on home computers was this: everyone should encrypt their email so the people who really need the protection don’t stick out as targets. At the same time, the authorities constantly warned that unless encryption was controlled by key escrow, an implanted back door, or restrictions on its strength, it would help hide the activities of drug traffickers, organized crime, pedophiles, and terrorists. The same argument continues today.

Today, billions of people have access to encrypted messaging via WhatsApp, Signal, and other services. Governments still hate it, but they *use* it; the UK government is all over WhatsApp, as multiple public inquiries have shown.

In *Dark Wire: The Incredible True Story of the Largest Sting Operation Ever*, Joseph Cox, one of the four founders of 404 Media, takes us on a trip through law enforcement’s adventures in encryption, as police try to identify and track down serious criminals making and distributing illegal drugs by the ton.

The story begins with Phantom Secure, a scheme that stripped down BlackBerry devices, installed PGP to encrypt emails, and added systems to ensure the devices could exchange emails only with other Phantom Secure devices. The service became popular among all sorts of celebrities, politicians, and other non-criminals who value privacy – but not *only* them. All perfectly legal.

One of my favorite moments comes early, when a criminal debating whether to trust a new contact decides he can because the contact has one of these secure BlackBerry devices. The criminal trusted the supply chain; surely no one would have sold him one of these things without thoroughly checking that he wasn’t a cop. Spoiler alert: he was a cop. That sale helped said cop and his colleagues in the United States, Australia, Canada, and the Netherlands infiltrate the network, arrest a bunch of criminals, and shut it down – eventually, after setbacks, and with the non-US forces frustrated and amazed by US constitutional law limiting what agents were allowed to look at.

Phantom Secure’s closure left a hole in the market as security-conscious criminals scrambled to find alternatives. It was rapidly filled by competitors working with modified phones: EncroChat and Sky ECC. As users migrated to these services and law enforcement worked to infiltrate and shut them down as well, former Phantom Secure salesman “Afgoo” had a bright idea, which he offered to the FBI: why not build their own encrypted app and take over the market?

The result was Anom. From the sounds of it, some of its features were quite cool. For example, the app itself hid behind an innocent-looking calculator, which acted as a password gateway. Type in the right code, and the messaging app appeared. The thing sold itself.

Of course, the FBI insisted on some modifications. Behind the scenes, Anom devices sent copies of every message to the FBI’s servers. Eventually, the floods of data the agencies harvested this way led to 500 arrests on one day alone, and the seizure of hundreds of firearms and dozens of tons of illegal drugs and precursor chemicals.

Some of the techniques the criminals used are fascinating in their own right. One method of in-person authentication involved sending the unique serial number of a bank note in advance; the mule delivering the money would simply have to show they had that bank note, a physical one-time pad. Banks themselves were rarely used. Instead, cash would be stored in safe houses in various countries and the money would never have to cross borders. So: no records, no transfers to monitor. All of this spilled open for law enforcement because of Anom.

And yet. Cox waits until the end to voice reservations. All those seizures and arrests barely made a dent in the world’s drug trade – a “rounding error”, Cox calls it.