Invisible Rulers: The People Who Turn Lies Into Reality

by Renée DiResta

Hachette

ISBN: 978-1-5417-0337-7

For the last week, as violence has erupted in British cities, commentators have been asking, among other things: what has social media contributed to the inflammation? Often, the focus lands on specific famous people such as Elon Musk, who told exTwitter that the UK is heading for civil war (which basically shows he knows nothing about the UK).

It’s a particularly apt moment to read Renée DiResta’s new book, Invisible Rulers: The People Who Turn Lies Into Reality. Until June, DiResta was the technical director of the Stanford Internet Observatory, which studies misinformation and disinformation online.

In her book, DiResta, like James Ball in The Other Pandemic and Naomi Klein in Doppelganger, traces how misinformation and disinformation propagate online. Where Ball examined his subject from the inside out (having spent his teenage years on 4chan) and Klein was drawn in from the outside, DiResta’s study is structural. How do crowds work? What makes propaganda successful? Who drives engagement? What turns online engagement into real-world violence?

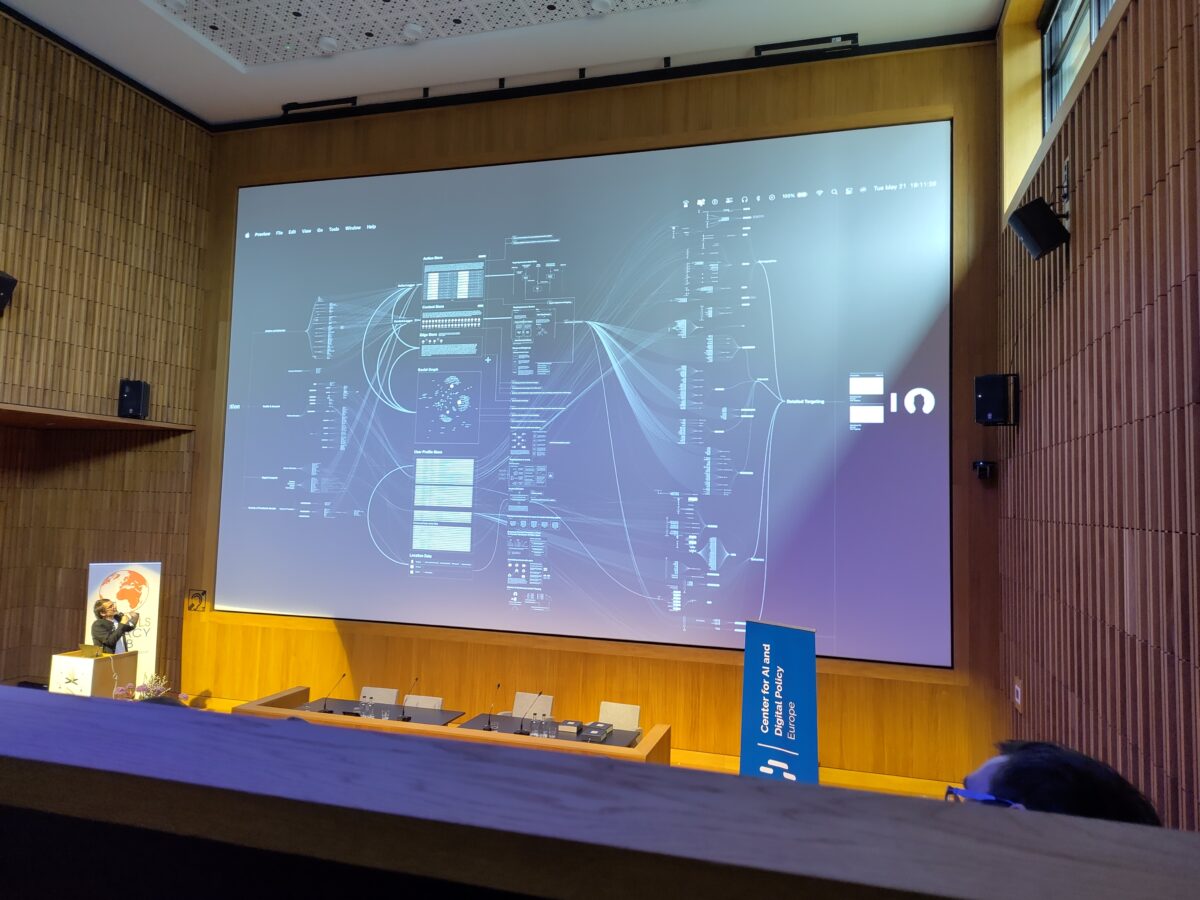

One reason these questions are difficult to answer is the lack of transparency about the money flowing to influencers, who may have audiences in the millions. The trust they build with their communities on one subject, like gardening or tennis statistics, carries over to other topics when they stray. Someone making how-to knitting videos one day expresses concern about their community’s response to a new virus, finds that it draws engagement, and eventually, through algorithmic boosting, finds greater profit in sticking to that topic instead. The result, she writes, is “bespoke realities” that are shaped by recommendation engines and emerge from competition among state actors, terrorists, ideologues, activists, and ordinary people. Then add generative AI: “We can now mass-produce unreality.”

DiResta’s work on this began in 2014, when, in light of rising rates of whooping cough in California, she was checking vaccination rates at the preschools she was considering for her one-year-old son. Why, she wondered, were there all these anti-vaccine groups on Facebook, and what went on in them? When she joined to find out, she discovered a nest of evangelists promoting lies with little opposition, a common pattern she calls “asymmetry of passion”. The campaign group she helped found succeeded in getting the law changed, but she also saw that the future lay in online battlegrounds shaping public opinion. When she presented her discoveries to the Centers for Disease Control, however, they dismissed them as “just some people online”. This insouciance would, as she documents in a later chapter, come back to bite during the covid emergency, when the mechanisms already built whirred into action to discredit science and its institutions.

Asymmetry of passion makes those holding extreme opinions seem more numerous than they are. Boosting algorithms and “charismatic leaders” such as Musk or Robert F. Kennedy, Jr (your mileage may vary) amplify this effect. DiResta does a good job of showing how shifts within groups – anti-vaxx groups that also fear chemtrails and embrace flat earth, flat earth groups that shift to QAnon – lead eventually from “asking questions” to “take action”. See also today’s UK.

Like most of us, DiResta is less clear on potential solutions. She gives some thought to the idea of prebunking, but more to requiring transparency: from platforms about their content moderation decisions, from influencers about payments for commercial and political speech, and from governments about their engagement with social media platforms. She also recommends giving users better tools and introducing some friction to force a little more thought before posting.

The Observatory’s future is unclear, as several other key staff have also left, although Stanford told The Verge in June that the Observatory would continue under new leadership. It is just one of several election integrity monitors whose future is cloudy; in March, Facebook announced it would shut down its research tool CrowdTangle on August 14. DiResta’s book is an important part of the Observatory’s legacy.