To anyone remembering the excitement over DNA testing just a few years ago, this week’s news about 23andMe comes as a surprise. At CNN, Allison Morrow reports that all seven board members have resigned to protest CEO Anne Wojcicki’s plan to take the company private by buying up all the shares she doesn’t already own at 40 cents each (yesterday’s closing price was $0.3301). The board wanted her to find a buyer offering a better price.

In January, Rolfe Winkler reported at the Wall Street Journal ($) that 23andMe is likely to run out of cash by next year. Its market cap has dropped from $6 billion to under $200 million. He and Morrow catalogue the company’s problems: it has never made a profit or had a sustainable business model.

The reason is fairly simple: few repeat customers. With DNA testing, as Winkler writes, “Customers only need to take the test once, and few test-takers get life-altering health results.” 23andMe’s mooted revolution in health care was instead a fad. Now, the company is pivoting to sell subscriptions to weight loss drugs.

This strikes me as an extraordinarily dangerous moment: the struggling company’s sole unique asset is a pile of more than 10 million DNA samples whose owners have agreed they can be used for research. Many were alarmed when, in December 2023, hackers broke into 1.7 million accounts and gained access to 6.9 million customer profiles. The company said the hacked data did not include DNA records but did include family trees and other links. We don’t think of 23andMe as a social network. But the same affordances that enabled Cambridge Analytica to leverage a relatively small number of user profiles to create a mass of data derived from a much larger number of their Friends worked on 23andMe. Given the way genetics works, this risk should have been obvious.

In 2004, the year of Facebook’s birth, the Australian privacy campaigner Roger Clarke warned in Very Black “Little Black Books” that social networks had no business model other than to abuse their users’ data. 23andMe’s terms and conditions promise to protect user privacy. But in a sale what happens to the data?

The same might be asked about the data that would accrue from Oracle CEO Larry Ellison’s surveillance-embracing proposals this week. Inevitably, commentators invoked George Orwell’s 1984. At Business Insider, Kenneth Niemeyer was first to report: “[Ellison] said AI will usher in a new era of surveillance that he gleefully said will ensure ‘citizens will be on their best behavior.’”

The all-AI-surveillance all-the-time idea could only be embraced “gleefully” by someone who doesn’t believe it will affect him.

Niemeyer:

“Ellison said AI would be used in the future to constantly watch and analyze vast surveillance systems, like security cameras, police body cameras, doorbell cameras, and vehicle dashboard cameras.

“We’re going to have supervision,” Ellison said. “Every police officer is going to be supervised at all times, and if there’s a problem, AI will report that problem and report it to the appropriate person. Citizens will be on their best behavior because we are constantly recording and reporting everything that’s going on.”

Ellison is twenty-six years behind science fiction author David Brin, who proposed radical transparency in his 1998 non-fiction outing, The Transparent Society. But Brin saw reciprocity as an essential feature, believing it would protect privacy by making surveillance visible. Ellison is claiming that *inscrutable* surveillance will guarantee good behavior.

At 404 Media, Jason Koebler debunks Ellison point by point. Research and other evidence show that securing schools is unlikely to make them safer; body cameras don’t appear to improve police behavior; and all the technologies Ellison talks about have problems with accuracy and false positives. Indeed, the mayor of Chicago wants to end the city’s contract with ShotSpotter (now SoundThinking), saying it’s expensive and doesn’t cut crime; some research says it slows police 911 response. Also worth noting is Simon Spichak at Brain Facts, who finds that humans make worse decisions with AI tools. So…not a good idea for police.

More disturbing is Koebler’s main point: most of the technology Ellison calls “future” is already here and failing to lower crime rates or solve its causes – while being very expensive. Ellison is already out of date.

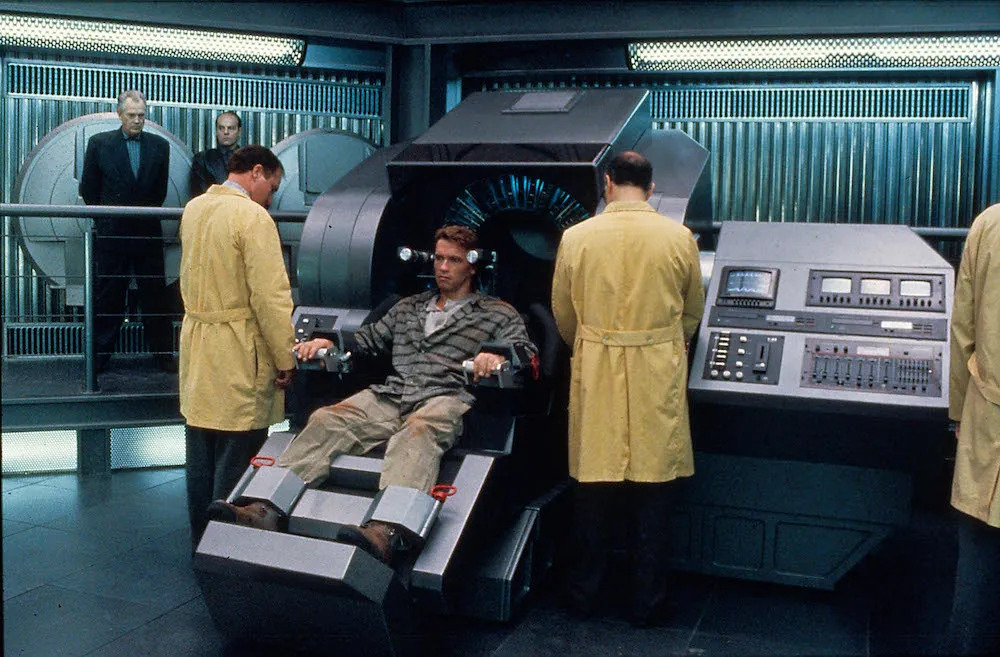

The book Ellison’s fantasy evokes for me is the less-known This Perfect Day, by Ira Levin, written in 1970. The novel’s world is run by a massive computer (“Unicomp”) that decides all aspects of individuals’ lives: their job, spouse, how many children they can have. Enforcing all this are human counselors and permanent electronic bracelets individuals touch to ubiquitous scanners for permission.

Homogeneity rules: everyone is mixed race, there are only four boys’ and girls’ names, they eat “totalcakes”, drink cokes, wear identical clothing. For the rest, regularly administered drugs keep everyone healthy and docile. “Fight” is an abominable curse word. The controlled world over which Unicomp presides is therefore almost entirely benign: there is no war or crime, and little disease. It rains only at night.

Naturally, the novel’s hero rebels, joins a group of outcasts (“the Incurables”), and finds his way to the secret underground luxury bunker where a few “Programmers” help Unicomp’s inventor, Wei Li Chun, run the world to his specification. So to me, Ellison’s plan is all about installing himself as world ruler. Which, I mean, who could object except other billionaires?

Illustrations: The CCTV camera on George Orwell’s Portobello Road house.

Wendy M. Grossman is the 2013 winner of the Enigma Award. Her Web site has an extensive archive of her books, articles, and music, and an archive of earlier columns in this series. She is a contributing editor for the Plutopia News Network podcast. Follow on Mastodon.